Worldline Labs is already in green AI

Introduction

In 2021, the International Energy Agency (IEA) published its Net-Zero Emissions by 2050 (NZE) Roadmap [1], which outlines a scenario built by the IEA to comply with the Paris climate agreement. From this report, we know that we urgently need to reduce our energy consumption. For instance, according to the NZE, the total energy supply must fall by 7% between 2020 and 2030 and remain at around this level until 2050. Energy resources regenerate much more slowly than demand grows, and at the same time the world’s population will keep growing. Using energy efficiently and reducing energy consumption are therefore becoming key concerns.

Significant efficiencies can be achieved by combining technology and renewable energy, and data science and Artificial Intelligence (AI) could be an important pillar of that effort. The whole network of energy production, transportation, and consumption can be monitored and orchestrated with the help of data science and AI. AI can monitor and collect information in the form of numbers, text, images, and videos. This information can then be analyzed by AI to manage energy usage and, for example, reduce it during peak hours. AI also helps improve industrial processes and all forms of operational tasks. Using collected data, AI can predict future failures, choose the best next actions, and support human planning and intervention. Thanks to AI, redundant or unnecessary tasks can be identified and eliminated. AI makes social and industrial processes orderly and smooth, avoiding losses from wasted time, wasted resources, and system breakdowns.

While AI could provide solutions to energy issues, data centers and large AI models need large computing infrastructures and consume energy during both solution development and operation. We should be careful that the solutions do not become part of the problem themselves, and that they consume as little energy as possible while maintaining the same level of system performance. Therefore, working on green AI becomes crucial to measure the benefit of the technology against its consumption and to make it more efficient and environmentally friendly.

Larger and Larger Models, Built Without Environmental Concern

There is no doubt that AI is coming into everyone’s daily life: personal assistants, chatbots, translators, autopilots, fraud and risk management, identity and access management, etc. While AI applications have a large and positive impact on society, problems such as security, ethics, and energy consumption are gradually emerging. Among these, the energy problem is becoming particularly crucial in the current international context.

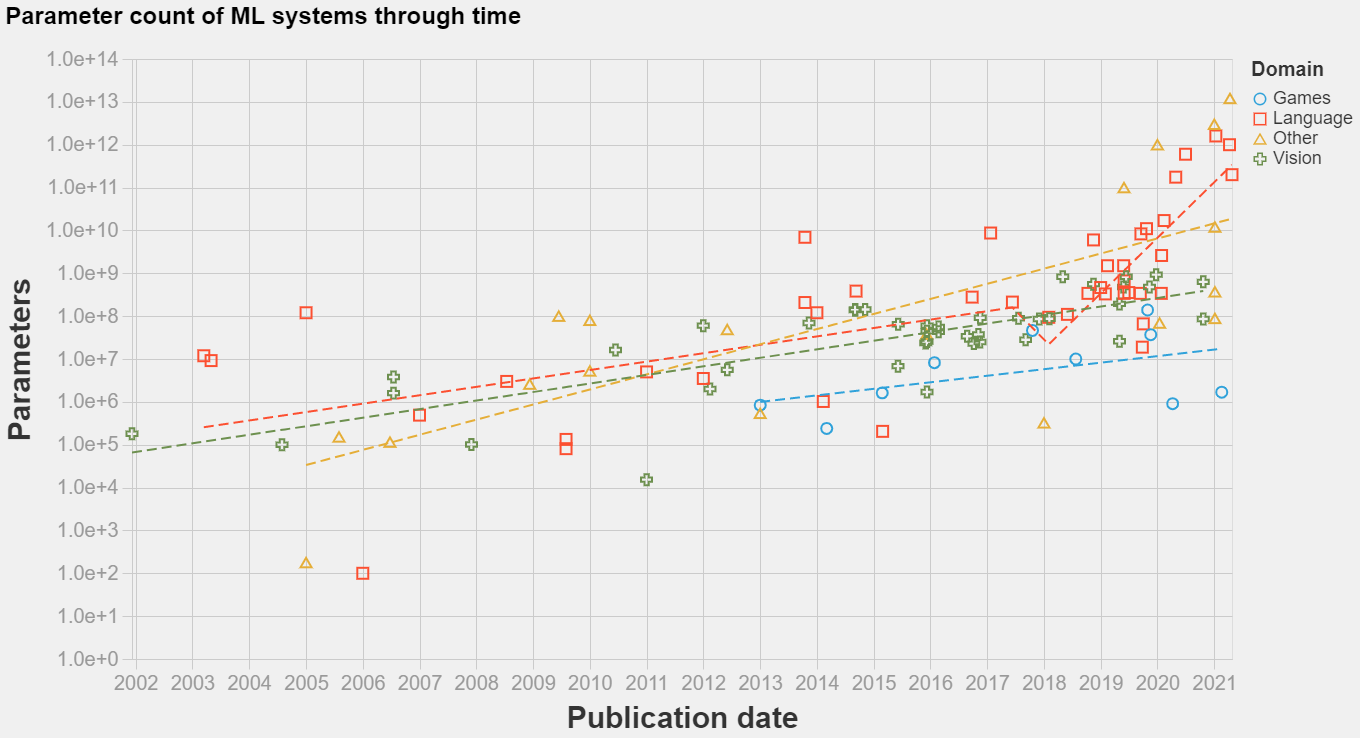

Figure 1. Evolution of model parameters through time

The most widely used AI models today are neural networks (aka deep learning). Training a neural network includes a forward pass, where an input is fed through the network and an output is generated. Then, the backward pass updates the weights of the neural network using the errors measured in the forward pass, via gradient descent algorithms. These forward and backward passes require a massive amount of matrix manipulation, and the matrices involved can be huge for large models. The training of a typical deep neural network repeats these passes until the model converges. The scale of AI models (number of parameters) has increased explosively in the past few years. Figure 1 shows the increase in the number of parameters in various AI domains. Concretely, taking language models as an example, the best performing model in 2019 (GPT-2) had around 1.5 billion parameters; GPT-3 in 2020 extended this number to 175 billion; and the recently published PaLM model is about three times larger than GPT-3, with 540 billion parameters.
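To make the forward and backward passes concrete, here is a minimal sketch for a one-weight linear model trained with plain gradient descent. The data, learning rate, and number of epochs are illustrative assumptions; a real neural network performs the same two steps on large matrices instead of single numbers.

```python
def train(xs, ys, lr=0.05, epochs=500):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    losses = []
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: compute predictions and the resulting error.
        preds = [w * x + b for x in xs]
        errors = [p - y for p, y in zip(preds, ys)]
        losses.append(sum(e * e for e in errors) / n)
        # Backward pass: gradients of the loss with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Gradient descent update of the weights.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, losses


# Toy data (assumption): generated by y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b, losses = train(xs, ys)
print(f"w={w:.3f}, b={b:.3f}, first loss={losses[0]:.3f}, last loss={losses[-1]:.6f}")
```

Every epoch costs one forward and one backward pass, so the energy bill of training grows with both the number of parameters and the number of epochs.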

Figure 2. Evolution of necessary computation power for machine learning models

Although the global energy consumption of the IT industry has not increased since 2010 [6], the explosion of the number of parameters in machine learning models means we should guard against the future risk of high energy consumption. According to some research [2], training GPT-3 requires roughly 190,000 kWh of electricity, producing around 85,000 kg of CO2 equivalents if fully relying on fossil fuels. This is the same amount produced by a car driving 700,000 km, about twice the distance between the Earth and the Moon. Moreover, the pursuit of larger models seems to never end. According to [3], as shown in Figure 2, the computing power needed for deep learning doubled every few months (roughly every 3.4 months) between 2012 and 2018, resulting in an estimated 300,000-fold increase in computing power. Running a model such as GPT-3 in the cloud requires an instance equivalent to the Amazon p3dn.24xlarge [4], with 8 Tesla V100 GPUs (300 W each).
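The figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below only derives the ratios implied by the numbers in the text; the average Earth-Moon distance of 384,400 km is an added assumption, and the resulting factors are implied values, not measurements.

```python
# Figures quoted in the text (after [2] and [4]).
TRAINING_ENERGY_KWH = 190_000
TRAINING_EMISSIONS_KG = 85_000
CAR_DISTANCE_KM = 700_000
GPU_COUNT = 8
GPU_POWER_W = 300

# Assumption: average Earth-Moon distance, not stated in the text.
EARTH_MOON_KM = 384_400

# Implied carbon intensity of the electricity, in kg CO2e per kWh.
grid_intensity = TRAINING_EMISSIONS_KG / TRAINING_ENERGY_KWH
# Implied car emissions, in g CO2e per km.
car_intensity = TRAINING_EMISSIONS_KG / CAR_DISTANCE_KM * 1000
# Driving distance expressed in Earth-Moon distances.
moon_distances = CAR_DISTANCE_KM / EARTH_MOON_KM
# Peak power drawn by the GPUs of one inference instance, in kW.
gpu_power_kw = GPU_COUNT * GPU_POWER_W / 1000

print(f"{grid_intensity:.2f} kg CO2e/kWh, {car_intensity:.0f} g CO2e/km")
print(f"{moon_distances:.2f} Earth-Moon distances, {gpu_power_kw:.1f} kW of GPUs")
```

The implied values are about 0.45 kg CO2e per kWh, about 121 g CO2e per km, and about 1.8 Earth-Moon distances, which is consistent with the "about twice" in the text.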

An energy-efficient and environmentally friendly AI roadmap is therefore becoming mandatory. The good news is that we are already on it.

From Red AI to Green AI: Worldline’s Good Practices and Suggestions

It has been shown empirically that large-scale AI models enjoy various advantages, and one can foresee that the pursuit of giant AI models will continue. Nonetheless, training such a model is unaffordable for most companies. How to reuse pre-trained large-scale models has therefore become an essential research topic. In particular, paradigms like transfer learning and prompt learning can adapt pre-trained models to various tasks at a fairly low energy cost. Such approaches partly re-train the large-scale white-box model on a small dataset to fit the business objective. All of this is built upon a vibrant open-source community. Furthermore, classical methods like model pruning and parameter weight reduction can lighten the burden of prediction, thus making the model more energy efficient. These methods reduce complexity after training by identifying the weights of the model that have little impact on the prediction and removing them.
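As an illustration of pruning, the sketch below zeroes out the weights with the smallest absolute value. Using magnitude as a proxy for "little impact on the prediction" is an assumption made for this example (it is a common heuristic, not necessarily the criterion used at Worldline), and the weight values are made up.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest absolute value."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by weight magnitude, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Hypothetical weights of one small layer.
weights = [0.8, -0.05, 0.02, -1.2, 0.3, 0.01]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

In practice the pruned model is re-evaluated, and often briefly fine-tuned, to check that its performance has not degraded.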

At Worldline, we favor transfer learning and model pruning. In addition, we have set up good practices toward green AI throughout the whole pipeline for each AI project.

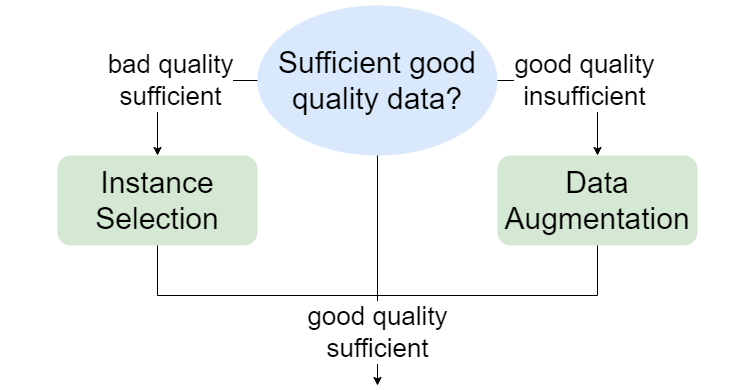

The first step concerns data preparation (Figure 3). We must first ask ourselves whether we have a lot of good-quality data. Note that a larger dataset does not always mean richer information. At the data preparation stage, we carefully investigate the data quality to ensure an efficient training process. Small but high-quality datasets are encouraged to reduce the time spent on energy-consuming computation.

Figure 3. Data preparation stage

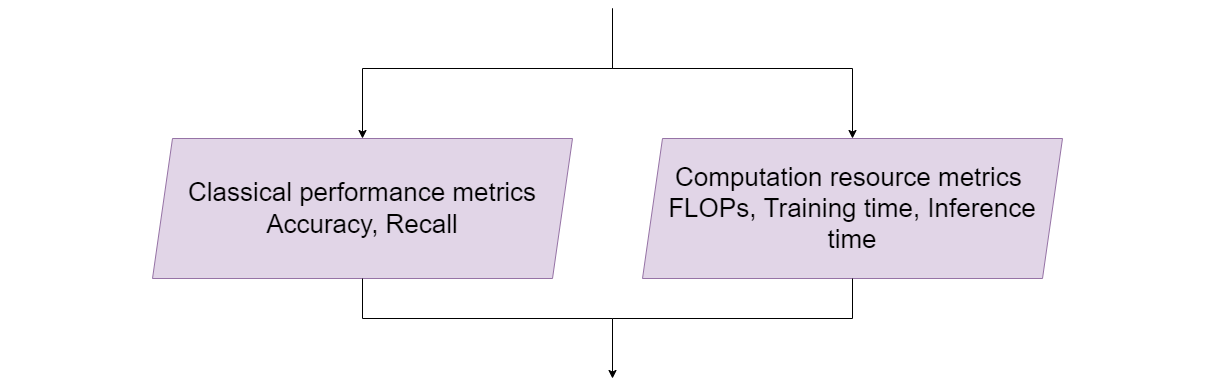

After the data preparation step comes the model building step, which requires the definition of a metric (Figure 4). During the metric selection stage, we evaluate a machine learning model not only on its prediction performance but also on its computational burden. To assess the computational burden, we can evaluate the number of machine operations performed during the main modeling phases, such as training or inference. We can also estimate the energy directly: for example, the Python package CodeCarbon estimates the energy consumption of a Python script according to its context of use (e.g., type of hardware).
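Counting machine operations can be done before running anything. The sketch below counts multiply-accumulate operations (MACs) for one forward pass of a fully connected network. It is a rough estimate under stated assumptions: biases and activation functions are ignored, and the layer sizes are hypothetical. It does not use CodeCarbon, which measures energy at run time instead.

```python
def dense_forward_macs(layer_sizes, batch_size=1):
    """Multiply-accumulate operations for one forward pass of a dense network.

    layer_sizes lists the number of units per layer, input layer first.
    """
    macs_per_example = sum(
        n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )
    return macs_per_example * batch_size


# Two hypothetical candidate architectures for the same task.
small = dense_forward_macs([784, 64, 10])
large = dense_forward_macs([784, 1024, 1024, 10])
print(f"small: {small} MACs, large: {large} MACs, ratio: {large / small:.1f}x")
```

If both candidates reach a similar prediction performance, the operation count gives a simple, hardware-independent argument for keeping the smaller one.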

Figure 4. Metric selection stage

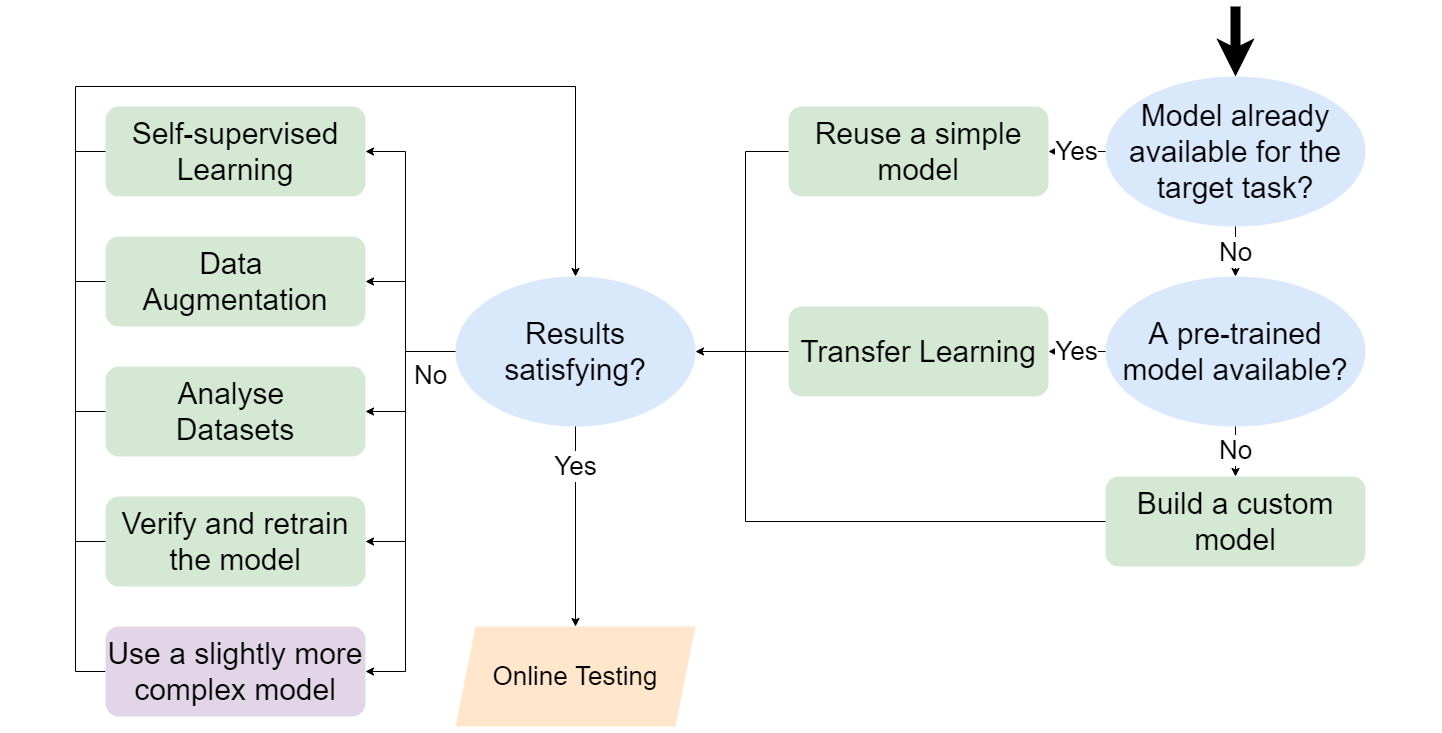

Once the model evaluation metrics are defined, we can proceed to the training stage (Figure 5). The stage is designed with respect to Ockham’s Razor: we increase the model’s complexity and the quantity of data only if necessary. For a defined target task, we first check whether an existing model can solve the problem without additional training. If that is not the case, we try to leverage a model pre-trained on a similar task and fine-tune its parameters with minimum training effort. We build a customized model and train it from scratch only for

- Tasks for which no pre-trained model exists

- Novel problems

- New types of datasets that no one has explored before
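The decision order described above can be sketched as a small function. The two boolean inputs and the returned labels are hypothetical names chosen for this illustration; how each condition is actually checked depends on the project.

```python
def choose_training_strategy(existing_model_solves_task, similar_pretrained_model_exists):
    """Return the cheapest strategy that fits, from least to most training effort."""
    if existing_model_solves_task:
        # No additional training needed.
        return "reuse"
    if similar_pretrained_model_exists:
        # Start from pre-trained weights and adjust them with minimal effort.
        return "fine-tune"
    # No usable starting point: novel problem or unexplored type of dataset.
    return "train-from-scratch"


print(choose_training_strategy(False, True))
```

The order of the checks is the point: the most energy-hungry option is only reached once the cheaper ones have been ruled out.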

The model is evaluated and chosen based on both performance and energy consumption. We apply bagging strategies and statistical tests on small but well-designed datasets to get a precise estimate of the model performance. By keeping only model improvements that are statistically significant, we keep the model light and efficient. In addition, to limit redundant training-evaluation iterations, we use tools like DVC to keep track of past experiments and their associated results. This avoids running the same experiment twice, which would be a waste of energy. Combined with the aforementioned model pruning and parameter weight reduction techniques, this lets us achieve a well-performing AI model with less energy consumption.
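One way to decide whether an improvement is statistically significant is a paired bootstrap over the evaluation set, sketched below. This is an illustrative choice of test, not necessarily the one used at Worldline; the function names, the 95% level, and the toy results are assumptions.

```python
import random


def bootstrap_accuracy_gain(correct_a, correct_b, n_resamples=2000, seed=0):
    """Return a 95% bootstrap interval for accuracy(B) - accuracy(A).

    correct_a and correct_b are 0/1 lists marking whether each model
    classified the same evaluation example correctly.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both models must be evaluated on the same examples")
    rng = random.Random(seed)
    n = len(correct_a)
    diffs = []
    for _ in range(n_resamples):
        # Resample evaluation examples with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(correct_b[i] - correct_a[i] for i in idx) / n)
    diffs.sort()
    low = diffs[int(0.025 * n_resamples)]
    high = diffs[int(0.975 * n_resamples) - 1]
    return low, high


def is_significant_gain(correct_a, correct_b):
    """Keep model B only if the whole interval lies above zero."""
    low, _ = bootstrap_accuracy_gain(correct_a, correct_b)
    return low > 0.0


# Toy results (assumption): model A is right on 50 of 100 examples, B on 80.
model_a = [1] * 50 + [0] * 50
model_b = [1] * 80 + [0] * 20
print(is_significant_gain(model_a, model_b))
```

A larger or more complex model that fails this check brings extra energy cost without a demonstrated benefit, so it can be discarded.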

Figure 5. Training stage

Here is some advice to move from Red AI to Green AI:

- The accuracy of the predictive model should not be the only objective to optimize. The environmental impact of the model must be considered during both the learning and prediction phases. Roughly speaking, model performance should be measured as the useful work done divided by the energy used, the CO2 emissions, and the other environmental impacts.

- It’s not always true that larger models work better than small models. You can reduce the size of the model. It has been shown that pruned models can often achieve performance comparable to that of the original models.

- Reduce numerical precision: for example, replace float64 variables with float32, or even integers. It is probably one of the simplest and most effective ways to reduce energy consumption.

- Always try to reuse existing models by starting training from pre-trained models.

- It is often better to have less data but of good quality than to have a lot of bad data. Big data is not the same as having a lot of information. With good data quality, you can learn faster and consume less energy.

- It is also possible to have a positive impact by using more efficient hardware for machine learning tasks.

- Consider using the cloud when running AI models [5].

- Use A/B tests to roll a model out to production gradually.

- Use machine learning experiment management tools like DVC for tracking and reproducibility.
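The precision advice in the list above is easy to try out. The sketch below stores the same values as 64-bit and 32-bit floats with Python’s standard `array` module and compares memory footprint and rounding error. The values are made up, and the link to energy is an assumption of this example: halving the bytes to store and move is expected to reduce consumption, but the code does not measure energy.

```python
from array import array

# Hypothetical feature values.
values = [0.1 * i for i in range(1000)]

as_float64 = array("d", values)  # double precision, 8 bytes per value
as_float32 = array("f", values)  # single precision, 4 bytes per value

bytes_64 = as_float64.itemsize * len(as_float64)
bytes_32 = as_float32.itemsize * len(as_float32)
# Largest rounding error introduced by the lower precision.
max_error = max(abs(a - b) for a, b in zip(as_float64, as_float32))

print(f"float64: {bytes_64} bytes, float32: {bytes_32} bytes")
print(f"largest rounding error: {max_error:.2e}")
```

Whether the rounding error is acceptable depends on the task, so the model’s performance should be re-checked after lowering the precision.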

References

[1] International Energy Agency (IEA). Net Zero by 2050, 2021. Report.

[2] Katyanna Quach. AI me to the Moon… Carbon footprint for ’training GPT-3’ same as driving to our natural satellite and back, 2020. Blog post. https://www.theregister.com/2020/11/04/gpt3_carbon_footprint_estimate/

[3] Dario Amodei and Danny Hernandez. AI and compute, 2018. Blog post. https://openai.com/blog/ai-and-compute/

[4] Ben Dickson. The GPT-3 economy. 2020. Blog post. https://bdtechtalks.com/2020/09/21/gpt-3-economy-business-model/3

[5] Tammy Xu. These simple changes can make AI research much more energy efficient, 2022. MIT Technology Review. https://www.technologyreview.com/2022/07/06/1055458/ai-research-emissions-energy-efficient/

[6] Eric Masanet, Arman Shehabi, Nuoa Lei, Sarah Smith, and Jonathan Koomey. Recalibrating global data center energy-use estimates, 2020. Science 367, no. 6481: 984-986.