The shift in web front-end tooling in the 2020s

February 2022 seems like a good moment to discuss the recent trends in web front-end tooling. Indeed, a large part of the front-end community is dropping some major tools that were both important and dominant over the past five years, such as Babel and Webpack, in favor of newcomers. So what changed? Let’s dig in.

The hunt for performance

Developer eXperience (DX) is the obvious main selling point for developer tooling. At the end of 2015, the main complaint from developers boiled down to what is called “JavaScript fatigue”: too many tools and too much configuration. A new wave of boilerplates and generators such as Yeoman, Gulp and Grunt supposedly came in to deal with that mess, but in truth it just added another layer of JS fatigue.

This problem was solved by moving this stack of tools to the framework side. Create React App, Angular CLI and Vue CLI all came with a preconfigured Webpack and plugins for local development and production builds. All these tools were configured with consistent, conventional defaults adopted over the years by the JS community. The framework scaffolding tools then stopped exposing the configuration and offered optional overrides instead, drastically reducing the amount of configuration found in the root folder of web projects.

So what else could be done to further improve the DX? It turns out the last remaining problem was performance. Although this was not a critical issue and JS developer tooling was already much faster than that of other tech stacks (cough Java cough), there was room for improvement. On large, complex projects, hot reloads could take as long as 30 seconds. Unbearable when the same reloads took less than a second at the beginning of the project! Thus began the hunt for performance.

JS tooling is no longer written in JS

In 2007 came this famous quote from Jeff Atwood: “Any application that can be written in JavaScript, will eventually be written in JavaScript”. And this has been very, very true for front-end tooling. When working on tools to improve their daily work experience, web developers picked the language they were already familiar with: JavaScript. Who would have guessed?

To be fair, this choice made sense in many ways. It is easier to work on code transform and code analysis tools when the language you are working on is the same as the language you are working with. It allows reusing the existing NPM ecosystem for both application and development dependencies (hence the devDependencies entry in your package.json). And it keeps the door open for the whole JS community (the most popular language in the world, need we remind you), so that they can invest their time and creativity in contributing to open source projects that they actually use on a daily basis.

Yet the tooling has become more and more complex, and the move of build tools and devtools to the framework side led to a professionalization of front-end tooling. The new JS rockstars appear to be people working on JS tooling, and no longer those working on JS projects. And this new generation of experts no longer sees JavaScript as a familiar helping hand, but as a poor technical choice for their performance goals.

A perfect example of this trend is the esbuild project, which has stepped up as the Webpack killer. This new bundler is written in Go by a single person, Evan Wallace, a big brain from the San Francisco Bay Area, a.k.a. Silicon Valley. It aims to be an extremely fast JavaScript bundler, bringing a new era of build tool performance. Webpack, on the other hand, was created with completely different objectives in mind: a large ecosystem of plugins and single-purpose code transforms written in JS/TS, maintained by a large group of people from all around the world. By the way, Babel was made in a similar fashion, and we’ll see below why it followed the same path.

So the 2020s will mark the rise of compile-to-native languages for front-end tooling, with languages like Rust, Go and Dart. The entry barrier for JS developers to contribute to their tooling stack will be much higher, if not completely out of reach. But in return, they will get extremely performant and standardized tools. Time will tell if this was a good trade. In the meantime, expect front-end tooling to look more and more like an all-in-one black box designed by leading experts. The closest thing we currently have to this black box is Rome Tools, a set of tools written in Rust including a linter, a compiler, a bundler and more. They aim to replace Babel, ESLint, webpack, Prettier, Jest and others, nothing less!

ES Modules to the rescue

ES Modules quickly gained popularity in the JS community, which had struggled with the CJS, AMD and UMD Frankenstein module patterns for years. However, it took at least five years for them to be broadly adopted by Node.js and web browsers, due to the complexity of making the transition while preserving backwards compatibility.

But here we are, with ESM support on all platforms, starting to unplug our bundlers for local development since ES Modules are natively supported in both our web browsers and our test suites in Node.js. Browsers do a great job at resolving the module tree in very little time. Unbundled code is certainly larger, but this is not a concern when it is loaded from the local disk of the developer’s machine. And development servers are quickly evolving to take advantage of ESM, instead of relying on automatic chunking features like the ones we used to have with Webpack.

Vite is a good example of such an ESM-based dev server. It is built on the idea that the way we split our application code into modules also provides the perfect split points for hot reloading. This makes sense when considering that all modern JS frameworks follow the component approach, with our web pages being built from small bricks, each associated with a file. Change the file, reload the brick, without losing context. Tracking the dependencies for hot reloading has never been so easy, and so fast, thanks to the static imports of ES Modules. This led to blazing fast startup and reloading times for modern dev servers, which sounds appropriate when you decide to call your project “Vite”.
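To make the “change the file, reload the brick” idea more concrete, here is a minimal sketch of how a dev server could decide what to reload from the import graph. This is not Vite’s actual implementation: the ModuleNode shape, the acceptsUpdates flag and the findReloadBoundaries function are all hypothetical names chosen for illustration.

```typescript
// Simplified model of a dev server's module graph (all names are hypothetical).
interface ModuleNode {
  importers: string[];      // files that import this module
  acceptsUpdates: boolean;  // true if the module can be swapped in place (e.g. a component)
}

type ModuleGraph = { [file: string]: ModuleNode };

// Walk up from the changed file until every path reaches a module that can
// hot-swap itself. If a path reaches an entry point first, reload the page.
function findReloadBoundaries(graph: ModuleGraph, changedFile: string): string[] | "full-reload" {
  const boundaries: string[] = [];
  const visited: { [file: string]: boolean } = {};
  const queue: string[] = [changedFile];

  while (queue.length > 0) {
    const file = queue.shift() as string;
    if (visited[file]) continue;
    visited[file] = true;

    const node = graph[file];
    if (!node) return "full-reload";          // unknown file: play it safe
    if (node.acceptsUpdates) {
      boundaries.push(file);                  // stop here, this brick reloads itself
      continue;
    }
    if (node.importers.length === 0) return "full-reload"; // reached an entry point
    for (const importer of node.importers) queue.push(importer);
  }
  return boundaries;
}

const graph: ModuleGraph = {
  "main.ts": { importers: [], acceptsUpdates: false },
  "App.tsx": { importers: ["main.ts"], acceptsUpdates: true },
  "Button.tsx": { importers: ["App.tsx"], acceptsUpdates: true },
  "format.ts": { importers: ["Button.tsx", "App.tsx"], acceptsUpdates: false },
  "config.ts": { importers: ["main.ts"], acceptsUpdates: false },
};

const afterButtonEdit = findReloadBoundaries(graph, "Button.tsx");
const afterFormatEdit = findReloadBoundaries(graph, "format.ts");
const afterConfigEdit = findReloadBoundaries(graph, "config.ts");
```

In this toy graph, editing Button.tsx only touches that component, editing the shared format.ts helper reloads the two components that import it, and editing config.ts falls back to a full page reload because nothing on the way up to main.ts can swap itself.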

This does not mean that we are getting rid of bundlers completely. Loading a chain of ten nested module dependencies in your browser is still much slower than loading a single bundle. And Webpack has been improving its chunking strategy for about 10 years now. UX is still much more valuable than DX, and we can surely tolerate a few additional minutes of build time if it saves a few seconds of loading time for each of our lovely users. So bundlers are still there! They will just move to your build process and CI logs, alongside test suites, code quality checks and audit tools which occasionally throw a few warnings in your face.
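A back-of-the-envelope model shows why a chain of nested imports hurts over the network. It assumes that each level of the chain costs one full round trip, because the browser only discovers a module’s imports once the module itself has arrived. The 50 ms round trip is an arbitrary figure, and the model deliberately ignores download size, caching and preload hints.

```typescript
// Rough model: each nesting level costs one network round trip, because a
// module's imports are only known once the module itself has arrived.
function sequentialLoadMs(chainDepth: number, roundTripMs: number): number {
  return chainDepth * roundTripMs;
}

const unbundledMs = sequentialLoadMs(10, 50); // ten nested modules, 50 ms each
const bundledMs = sequentialLoadMs(1, 50);    // a single bundle, one round trip
```

Under these assumptions the unbundled chain needs 500 ms of pure waiting against 50 ms for the bundle, which is negligible on a local disk but very noticeable for a user on a mobile network.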

That means the toolchains for local development and for production bundling are now very different, with dedicated tools and different objectives. Dev tools aim for super-fast startups and reloads so that you can focus on code, like a rally co-driver. Build tools aim for super-optimized bundling, chunking and minifying rocket science, with a hint of the code transforms required to make it work on the worst browsers your users will dare to show up with.

Code quality tooling gets out of the build chain

Bundling, chunking, minifying, what are we missing? Our good old linter friend of course! How are we going to live without our daily dose of “Unnecessary trailing comma” warnings? Yet, on modern front-end tool stacks, these pleasant reminders have disappeared from our build logs. Where did they go?

Linters in the editor

A quick answer comes from the editors, which now integrate much better with popular tools like ESLint or Prettier. In the early 2010s, JS code analysis was almost exclusively done by dedicated tools built into paid JS editors, behind closed doors. But developers were frustrated by the price barrier and by the fact that these tools were not open source. That’s why they agreed to take the linter out of their editor and use external JS validators and syntax checkers.

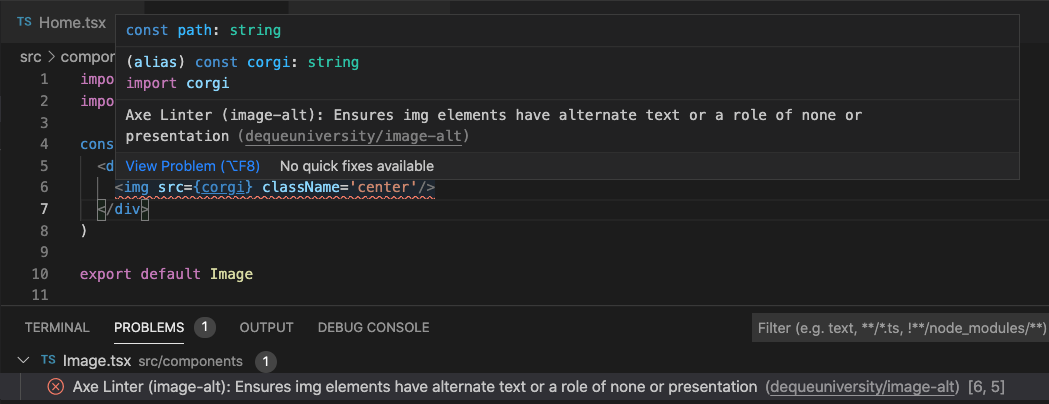

These tools matured over time and consolidated into a few leading projects like ESLint for linting and Prettier for code formatting. We reached the point where it makes sense for editors to bring built-in support for these tools. Visual Studio Code, to name one, became the most popular free code editor in the front-end community and has excellent support for ESLint and Prettier thanks to its plugin system. The formatters are applied automatically on file save or on every character input. The linters and syntax checkers run in the background, and warning and error messages are displayed directly over your code, in pretty convenient tooltips.

Considering that most developers now have this base level of linting and formatting built into their editor, it no longer makes sense for frameworks to keep them in the local development build step. The idea is to not have to wait for Control+S and a server reload to notice errors that can be displayed directly in the IDE. It also reduces compile and reload times for local development, which helps a lot with the performance hunt we mentioned before.

Another interesting evolution of editors is the built-in support for TypeScript and static type-checking in the background. This led to very opinionated choices in dev tooling, such as esbuild compiling TypeScript code into JS without even checking the types! It just does the bare minimum work of removing the type annotations. Why should it bother to run a type-check step if it is already done sooner and better by the editor?
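The snippet below is a small illustration of why removing annotations is enough to produce working JavaScript: types exist only at compile time and leave nothing behind at runtime. It is not specific to esbuild, and the add function and userInput value are made up for the example.

```typescript
function add(a: number, b: number): number {
  return a + b;
}

// Data from outside the type system (JSON, form fields...) often arrives as `any`.
const userInput: any = "2";

// This type-checks, and once the annotations are removed it is plain `a + b`:
// nothing stops the string from being concatenated at runtime.
const total = add(userInput, 3);
const runtimeType = typeof total;
```

Here total ends up being the string "23" even though it is declared as a number. Whether the types were checked or not does not change the emitted code, which is why a dev server can skip that step and leave it to the editor and the CI.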

So the new trend is to remove code quality tools from development dependencies, and rely on editor support instead. Linting and type-checking are still done in CI for production bundles, to prevent any prankster dev working with vim or notepad from pushing a mistake to production.

Quality Scoring on CI and Userland

Recently, we have also seen a lot of progress in the field of automatic project scanning and quality scoring. Security checks, performance tests, mobile responsiveness, accessibility audits: many aspects of the quality of a web project can now be deeply analyzed by automatic scanning tools. One of these tools in particular, Lighthouse, gained a lot of popularity since it gathers all kinds of audits in a single, efficient tool. Moreover, this tool is built into the Chrome DevTools, which come with Chromium and so are also included in Edge, Opera, Brave and all the other Chromium-based browsers. Lighthouse can also run as a standalone program on all platforms, or directly in your CI with Lighthouse CI.

Not only are these audit tools very useful, but they can no longer be ignored. Since every user on the Internet can run these audits on your website with a few clicks, using websites like web.dev/measure, the major shortcomings of your applications are now publicly exposed, and can earn you bad press in the tech community.

On another front, systematic package analysis is now the norm. You get a list of vulnerabilities found in your dependencies at every npm install, and Dependabot opens hundreds of pull requests on your GitHub projects to fix security issues by upgrading dependency versions. Security analysis is everywhere and no longer an opt-in, nice-to-have feature in your dev toolkit. Again, these audits cannot be ignored because they are publicly exposed for every public package. Have a look at snyk.io or npms.io to see the score of your favorite NPM package.

The JS dev community also came together in a common effort to reduce the number of dependencies in common tools and libraries on NPM. Mostly because they can no longer bear the “node_modules is a black hole” meme, but also to reduce the risks and burden associated with too many dependencies. Therefore, you can expect the node_modules folder of a fresh new web framework project to be much smaller than it used to be in 2015. This is, without a doubt, very good news.

In short, all the quality analysis tools have taken off and are no longer part of the build chain of your web project. Now, they are part of the platforms, the browsers and the code editors.

The new status quo of “modern JS”

ECMAScript 6, renamed ES2015, was a turning point for JavaScript and its tooling. Because this major update to the JavaScript specification was very appealing to developers, a large part of the community accepted the idea of adding a compilation/transpilation step just to be able to use “modern JS” in their projects. Therefore, ES6 played a crucial role in the transition from the jQuery era to the transpiler era, which paved the way for smarter code transforms and new languages built on top of JS like TypeScript.

TypeScript has won, in an interesting way

At present, the success of TypeScript is undeniable. The static typing layer built over JavaScript as a new language by Microsoft is now supported and praised by all major web frameworks. Deno, the spiritual successor of Node.js, also has built-in support for running TypeScript code.

Its success was not guaranteed though. It had competitors like Facebook’s Flow, or the initial versions of Google’s AtScript, promised for Angular 2.0 before the Angular team decided to work together with the TypeScript team. There is also a long list of statically typed languages that compile to JavaScript: Dart, Elm, PureScript, ReScript. The sustained efforts from Microsoft in language development, marketing and language tooling paid off in the long term.

But TypeScript also won in a curious, interesting way. TypeScript has a feature called definition files, which are *.d.ts files containing type definitions that can be applied to existing, vanilla JavaScript source code. These have been used to provide static type information for existing popular JavaScript libraries. A central repository called DefinitelyTyped aims to centralize the effort of providing such definition files for all the popular libraries. This means you can retrieve the type definitions for the “Foobar” library by npm installing @types/foobar. This project was so successful that editors started to automatically check for the presence of definition files for each installed package, and to provide type-checking and autocompletion for every project, be it written in TypeScript or vanilla JavaScript. You read that correctly: you benefit from TypeScript even if you are not writing TypeScript code! This is a huge win for the whole community.
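The principle behind definition files can be mimicked in a single file: a description of shapes, with no implementation, is laid on top of code the compiler knows nothing about. In the sketch below, the FoobarApi interface plays the role of a hypothetical foobar.d.ts, and untypedFoobar stands in for the library written in plain JavaScript. Both are invented for the example.

```typescript
// What a (hypothetical) foobar.d.ts would describe: shapes only, no implementation.
interface FoobarApi {
  greet(name: string): string;
  version: string;
}

// Stand-in for a library written in plain JavaScript: typed as `any`,
// so the compiler knows nothing about what it contains.
const untypedFoobar: any = {
  greet: function (name: string) {
    return "Hello, " + name + "!";
  },
  version: "1.0.0",
};

// Applying the description on top of the existing code.
const foobar: FoobarApi = untypedFoobar;

// From here on, the editor can autocomplete `greet` and knows it returns a string.
const message = foobar.greet("Ada");
```

A real definition file does the same job from a separate *.d.ts file, so the library itself does not need to change at all.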

Since the birth of TypeScript, JavaScript has caught up with many of its features: modules, classes, iterators, private members… TypeScript is now mainly used just for its type annotations, which makes the job of the compiler much easier. We also saw that modern dev servers do not even bother to run a type-check step on compilation, leaving this job to the editor. So the official TypeScript compiler, tsc, is surprisingly rarely used. We can conclude that TypeScript shines more as a language than as a toolkit.

Transpilers are less crucial

We are living the dream in an evergreen browser world. With IE dead for good in 2022, we live in an era where browsers are automatically updated and, in general, very good at CSS/JS support, on both mobile and desktop.

Safari is the new lowest target in terms of browser support, and Apple has recently been under a lot of pressure from the web dev community to change that.

This broad support for a modern, post-ES6 JS reduces the importance of transpilers like Babel (historically named 6to5). The additions to JS after ES2018 have also been mostly ignored by developers, not because they are bad, but because they are much less significant than what ES2015 brought to the table. That led to a decline in popularity for Babel, especially for local development. We still expect transpilers to be part of the production build toolchain, in a targeted compilation setup with @babel/preset-env. The same goes for CSS transpiling with postcss-preset-env. Developers are also less likely to use proposal-stage (pre-stage 4) language features, except for niche use cases.

Smart compilers and framework magic

Since frameworks now embed the build tooling and its preconfiguration, as we explained earlier, it is no surprise that they started to implement code transforms in their compilers for the specific needs of the framework. Angular has its own compiler, ngc, which compiles Angular-specific decorators and component templates. Vue also has its own code transforms for dealing with its single-file component format. Vue also recently proposed more compilation magic in this RFC to work around some limitations of JavaScript regarding reactivity on variable reassignment. And React promotes the use of JSX, an extension of JavaScript to be used instead of a custom template syntax, which is still being debated in the JS community.

We also need to mention the latest outsider in the JS framework landscape, Svelte by Rich Harris. Svelte is a new generation of framework based exclusively around its compiler: its promise is to have a zero runtime footprint after build. The compiler sets up everything needed for your component architecture to work properly, leaving no trace of the framework after build (although the bundled code is recognizable and the savings in size become less and less significant as the application grows). Svelte also uses some strange syntax to add its reactivity, by reappropriating an old feature of the language: JS labels.
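For readers who have never met a label, the sketch below shows the feature in its traditional role: naming a loop so that an inner loop can break out of it. The firstPairWithSum function is made up for the example. Svelte reuses the same label syntax with the name $, as in “$: doubled = count * 2”, and its compiler gives that statement a reactive meaning.

```typescript
// A label names a statement; here it lets the inner loop exit the outer one.
function firstPairWithSum(values: number[], target: number): [number, number] | null {
  let found: [number, number] | null = null;

  outer: for (let i = 0; i < values.length; i++) {
    for (let j = i + 1; j < values.length; j++) {
      if (values[i] + values[j] === target) {
        found = [values[i], values[j]];
        break outer; // leaves both loops at once
      }
    }
  }
  return found;
}

const pair = firstPairWithSum([1, 2, 3, 4], 5);
const noPair = firstPairWithSum([1, 2, 3], 100);
```

Since a labeled statement is valid JavaScript syntax, a Svelte component’s script still parses with standard tooling, even though its meaning only exists once the Svelte compiler has rewritten it.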

As you can see, modern JS tends to have some “framework flavours” today, which is not good for the cohesion of the JS landscape. JavaScript is also more and more used as a compilation target, for example with Flutter Web (Dart) or Kotlin Multiplatform apps. We expect ES2018, post-ESM JavaScript to be the foundation layer serving as a compilation target for the Web. Some thought this role would be taken by WebAssembly, but WebAssembly is progressing very slowly and is expected to really shine only for a small niche of applications.

In conclusion, modern JS no longer shines as a language, but as a universal compilation target. Most of the interest of the web dev community is now directed at proprietary languages or compiler hints. It does not mean that JS is no longer popular or attractive; on the contrary, it’s more popular than ever. But it looks like there is less room for progress on the language side, and the new interesting stuff is built on top of it.

Conclusion

This sums up the shift in front-end tooling we are witnessing these years. From the user’s point of view, the maturity of the tooling is supposed to bring better and faster websites, with fewer bugs and state-of-the-art features. But from the developers’ point of view, their working tools are now very complex, coded in a language they do not master, and integrated into a stack to which they have less and less access. We are a long way from the days when anyone could create their site by opening a text file and scribbling a bit of HTML.