Kafka Manager by Worldline

Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications.

Kafka is one of the best-known message brokers and is used in IT projects all over the world. Thanks to its high resilience, scalability and reliability, it can be adapted to many business use cases and architectures. These are some of the reasons why Worldline, like many other IT companies, has chosen to run Kafka in production.

In this article, we discuss the issues we encountered, the solutions we found, and how we shared them with the community.

Context

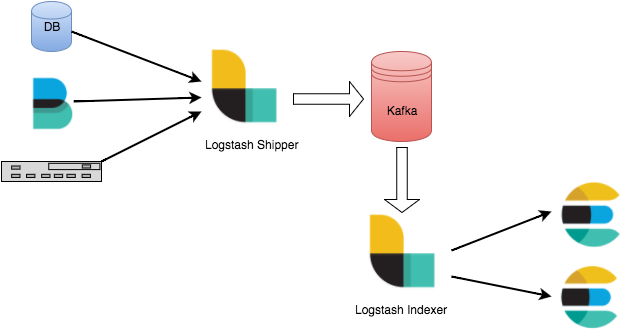

In Kafka, topics let us build asynchronous processes or set up a buffer in front of other components (see the ELK use case in the figure). Many use cases are possible, covering a wide range of functional and technical needs.

Kafka usage example in ELK

Over the last few years, Worldline has deployed Kafka in several projects. The architecture of some applications is now entirely based on Kafka, both for the messaging part (the role traditionally played by Java Message Service, JMS) and for Kafka Connect.

However, even though many teams have chosen this technology, it is difficult to implement and understand. Managing the cluster according to each project's needs involves a steep learning curve. Although some cluster information is available through APIs, CLI commands or even JMX, these are not easy to use. Moreover, these APIs do not allow simple monitoring of the cluster and its activity (which mainly depends on the functional context). As a consequence, Kafka can look like a black box to developers who have never had the opportunity to be trained on it. Nowadays, people prefer web UIs to manage their systems.

This is why we started looking for a tool that would make Kafka easier to understand and, above all, let us monitor its behaviour in production. As Worldline gives its employees time to study innovative or technical solutions, we used this time to build Kafka Manager.

Market study

The first thing we thought was: “It’s unlikely that we are the only ones facing this problem and asking these questions”. We started a market study to identify the tools already used by Kafka users.

Internal

Worldline is a large company with many experts. Internally, we met several architects who had already deployed this technology. It turned out that many of them had used several open source solutions available on the market without being fully satisfied.

Typically, one solution was used for the basic Kafka functionality (information on topics, consumers…), another to manage Kafka Connect, and yet another to manage logs… All these tools had to be run side by side in production to cover every need, and each depended on the Kafka version in use. The more tools you deploy, the higher the maintenance cost.

In addition, these tools did not offer any API to integrate them into a monitoring solution, so we could not connect our Kafka cluster to Worldline's monitoring solution.

External

Our internal investigations did not bear fruit, so we turned to the solutions available on the market:

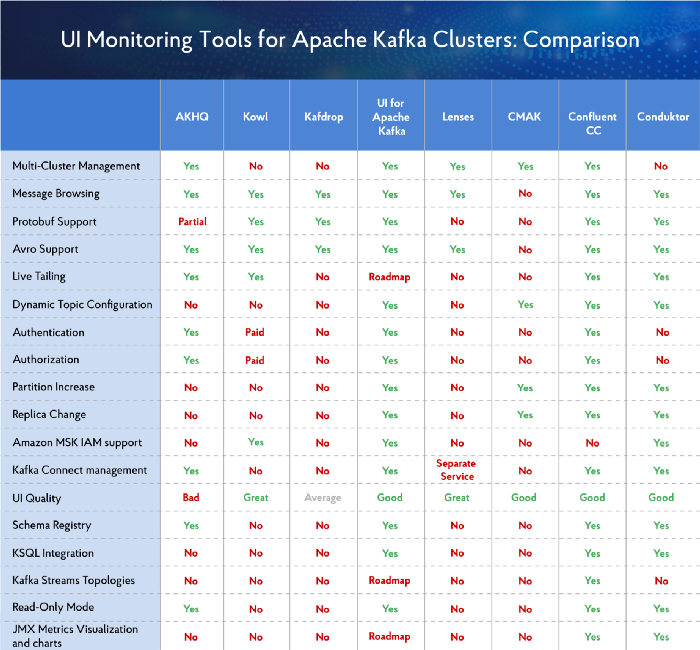

Overview of UI Tools for Monitoring and Management of Apache Kafka Clusters

This study shows that few of these tools combine all the features we were looking for. In addition, some of the tools we tested could not be used directly in a company setting because its CISO would consider them too risky. Indeed, as Worldline runs many payment projects, we needed a tool compliant with PCI rules. Finally, the remaining tools required paid licenses and could therefore hardly be used in production on most of our projects.

Based on this study, we decided to build our own tool, Kafka Manager, tailored to our needs and inspired by existing tools (their presentation, their formatting…).

Our project

The goal of our project was simple: we wanted to provide a complete open source solution to manage and monitor a Kafka cluster. We started to define the objectives of our MVP with the following needs in mind:

- A graphical display of relevant Kafka information for a visual understanding of the data (graphs, colors, interpretation of certain data…)

- The ability to view the configuration in place and to modify it where possible

- A display of the data or messages contained in Kafka topics, with the ability to edit and re-inject them

- A simple metrics REST API to retrieve Kafka data such as the number of messages produced, the number of messages consumed, the lag, and many other indicators giving an overview of what is happening in our Kafka cluster

- A simple monitoring REST API to track Kafka's behavior, for example the activity or inactivity of a topic, or its lag

For the technology stack, we chose the tools we knew best:

- A backend in Java Spring Boot

- A frontend with Angular via the AdminLTE template

- A final Docker image to deliver our application

Information

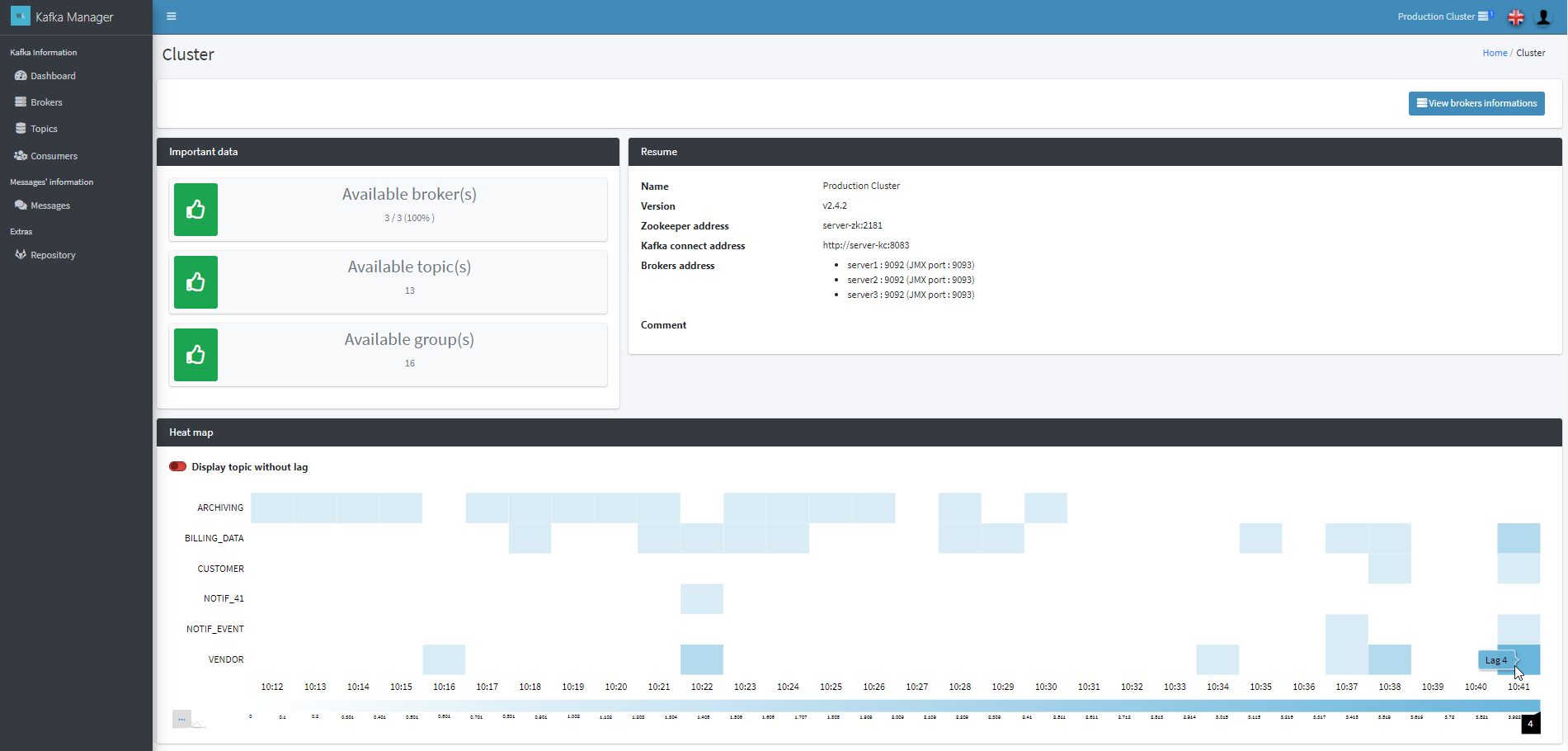

First, we started with an approach used in many existing tools, offering a visualization of the different levels of Kafka architecture:

- the cluster (the whole Kafka stack)

- the brokers (the nodes making up the cluster)

- the topics / partitions

- the consumer groups

This view is the core of our tool, and we built various features on top of it. We wanted anyone to be able to view information about the cluster easily. However, developers remain responsible for interpreting this information according to their functional or technical needs.

Kafka Manager - Dashboard

Authentication

Kafka Manager exposes several features that are sensitive for a Kafka cluster:

- Delete a topic

- Delete a consumer group

- Reset offsets

Ideally, not every user of the tool should be able to administer your cluster. Kafka Manager therefore supports two types of authentication.

Simple

Simple authentication provides two users, SIMPLE and ADMIN. Each one authenticates with a static login and password defined when the tool is set up.

You can then give each person the account type that matches their profile.
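As an illustration only, the two static accounts could gate the sensitive features listed above roughly as follows. This is a minimal sketch, not Kafka Manager's Java implementation: the logins, passwords, and action names (`delete_topic`, `reset_offsets`, ...) are hypothetical.

```python
import hmac
from enum import Enum
from typing import Optional


class Role(Enum):
    SIMPLE = "SIMPLE"
    ADMIN = "ADMIN"


# Hypothetical static accounts, defined when the tool is set up.
# A real deployment would load them from configuration, not source code.
STATIC_USERS = {
    "viewer": ("viewer-password", Role.SIMPLE),
    "admin": ("admin-password", Role.ADMIN),
}

# Illustrative names for the sensitive features listed above.
ADMIN_ONLY_ACTIONS = {"delete_topic", "delete_consumer_group", "reset_offsets"}


def authenticate(login: str, password: str) -> Optional[Role]:
    """Return the account's role if the static credentials match, else None."""
    entry = STATIC_USERS.get(login)
    if entry is None:
        return None
    expected_password, role = entry
    # compare_digest is designed to resist timing attacks
    if hmac.compare_digest(expected_password.encode(), password.encode()):
        return role
    return None


def is_allowed(role: Role, action: str) -> bool:
    """SIMPLE accounts may only read; ADMIN may also run sensitive actions."""
    return action not in ADMIN_ONLY_ACTIONS or role is Role.ADMIN
```

Keeping the list of sensitive actions in one set makes it easy to check that every destructive endpoint is covered.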

OpenID Connect

Kafka Manager can also authenticate users through OpenID Connect. Rights are granted based on the roles carried in the token, and the expected roles are defined when the tool is set up. In addition, administrators can be declared explicitly through a list of logins.
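A minimal sketch of the admin decision described above, assuming the token has already been validated and decoded into a dictionary of claims. `preferred_username` is a standard OpenID Connect claim, but where roles live in the token varies by identity provider, so the `roles` claim name here is an assumption.

```python
from typing import Any, Iterable, Mapping


def resolve_is_admin(
    claims: Mapping[str, Any],
    admin_roles: Iterable[str],
    admin_logins: Iterable[str],
    roles_claim: str = "roles",  # assumption: provider-specific claim name
    login_claim: str = "preferred_username",
) -> bool:
    """Grant admin rights if the token carries an admin role
    or if the user's login appears in the explicit admin list."""
    token_roles = set(claims.get(roles_claim, []))
    login = claims.get(login_claim)
    return bool(token_roles & set(admin_roles)) or login in set(admin_logins)
```

The explicit login list acts as an override, which is useful when the identity provider's roles cannot be changed easily.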

Settings

Having had to change configurations in production several times, we wanted to be able to update topic or broker settings easily without going through the broker CLI, or at least to use it as little as possible. We therefore built a form listing every parameter offered by Kafka, showing its default value, its current value and a short description. Topic configuration is handled the same way, whether creating a topic or modifying an existing one, for example by adding a partition.
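The default-versus-current comparison behind such a form can be sketched as below. In a Java backend, Kafka's `Admin.describeConfigs` returns `ConfigEntry` objects whose `source()` indicates whether a value is a default; this sketch only models the comparison with plain dictionaries. The three defaults shown are Kafka's documented topic-level defaults; `SettingRow` and `build_settings_form` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SettingRow:
    name: str
    default: str
    current: str

    @property
    def overridden(self) -> bool:
        """True when the current value differs from Kafka's default."""
        return self.current != self.default


def build_settings_form(defaults: Dict[str, str], current: Dict[str, str]) -> List[SettingRow]:
    """One row per known parameter; unset parameters fall back to the default."""
    return [
        SettingRow(name, default, current.get(name, default))
        for name, default in sorted(defaults.items())
    ]


# A few Kafka topic-level defaults (values as strings, as Kafka reports them)
TOPIC_DEFAULTS = {
    "cleanup.policy": "delete",
    "min.insync.replicas": "1",
    "retention.ms": "604800000",  # 7 days
}
```

For example, `build_settings_form(TOPIC_DEFAULTS, {"retention.ms": "86400000"})` flags only `retention.ms` as overridden, which is the information a form needs to highlight custom settings.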

Kafka log

One of the key features of our application is the visualization of logs (Kafka messages). During a production run, a message can sometimes get stuck for various reasons: bad formatting, incorrect consumer code… This blocks consumption of the partition, and the following messages are no longer processed. To work around this, an error queue (Dead Letter Queue) can be set up, in which failing messages are stored after several consumer retries.
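The dead letter queue pattern described above can be sketched as follows. In this pattern the retries happen in the consuming application itself. The sketch assumes a hypothetical `process` callback and uses in-memory lists in place of real Kafka topics; a production consumer would produce the failed record to a dedicated DLQ topic instead.

```python
from typing import Callable, List, Optional, Tuple

Message = Tuple[int, bytes]  # (offset, payload)


def consume_with_dlq(
    messages: List[Message],
    process: Callable[[bytes], None],
    dead_letter_queue: List[Message],
    max_retries: int = 3,
) -> Optional[int]:
    """Process messages in order. After max_retries failed attempts, park the
    message in the dead letter queue instead of blocking the partition.
    Returns the next offset to commit (None if there was nothing to read)."""
    next_offset = None
    for offset, payload in messages:
        for attempt in range(1, max_retries + 1):
            try:
                process(payload)
                break
            except Exception:  # any processing error counts as a failed attempt
                if attempt == max_retries:
                    dead_letter_queue.append((offset, payload))
        # processed or parked: either way, the partition can move on
        next_offset = offset + 1
    return next_offset
```

Without the dead letter queue, the loop would have to either retry forever or drop the message silently; parking it keeps the partition flowing while preserving the message for inspection.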

This feature lets users view the stuck message in the main topic or in the error queue in order to understand the problem. To unblock the situation quickly, they can also move the consumer group's current offset past the problematic message so that consumption of the topic resumes.
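Skipping a message relies on one Kafka convention: a group's committed offset is the offset of the *next* record it will read, so skipping record N means committing N + 1. A minimal sketch with plain dictionaries (the function name is hypothetical). For comparison, Kafka's own `kafka-consumer-groups.sh` performs a similar reset with `--reset-offsets --to-offset` (or `--shift-by 1`) plus `--execute`, and only while the group has no active members.

```python
from typing import Dict, Tuple

TopicPartition = Tuple[str, int]


def skip_stuck_message(
    committed: Dict[TopicPartition, int],
    partition: TopicPartition,
    stuck_offset: int,
) -> Dict[TopicPartition, int]:
    """Return new committed offsets that skip the record at stuck_offset.

    The group must currently be positioned on that record; otherwise
    committing stuck_offset + 1 would also skip unread records."""
    current = committed.get(partition)
    if current is not None and current != stuck_offset:
        raise ValueError(
            f"group is at offset {current}, not at stuck offset {stuck_offset}"
        )
    updated = dict(committed)
    updated[partition] = stuck_offset + 1
    return updated
```

Refusing to move when the group is not on the stuck record prevents an accidental jump over messages that were never processed.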

To read the messages in a topic, Kafka Manager creates a new consumer group with a unique ID. Reading can start either from the beginning of the topic or from the same offset as another consumer group. This ID can be shared between users so that they see the same offset and message state.
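The two starting options can be sketched as follows. The group ID prefix and function names are hypothetical. Note that the beginning of a topic is not necessarily offset 0 once retention has deleted old records, which is why the sketch takes explicit beginning offsets (in Java, `KafkaConsumer.beginningOffsets` provides them).

```python
import uuid
from typing import Dict, Optional, Tuple

TopicPartition = Tuple[str, int]


def new_reader_group(prefix: str = "kafka-manager-reader") -> str:
    """Unique consumer group ID; share it so others see the same position."""
    return f"{prefix}-{uuid.uuid4()}"


def starting_offsets(
    beginning_offsets: Dict[TopicPartition, int],
    other_group_offsets: Optional[Dict[TopicPartition, int]] = None,
) -> Dict[TopicPartition, int]:
    """Start from the beginning of each partition, or copy another group's
    committed offsets. Partitions the other group never committed fall back
    to the beginning."""
    if other_group_offsets is None:
        return dict(beginning_offsets)
    return {
        tp: other_group_offsets.get(tp, start)
        for tp, start in beginning_offsets.items()
    }
```

Using a fresh group means reading never disturbs the offsets of the production consumers being investigated.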

Once the message has been read and the error identified, the message can also be edited and re-injected into the topic for reprocessing.

Metrics & Monitoring

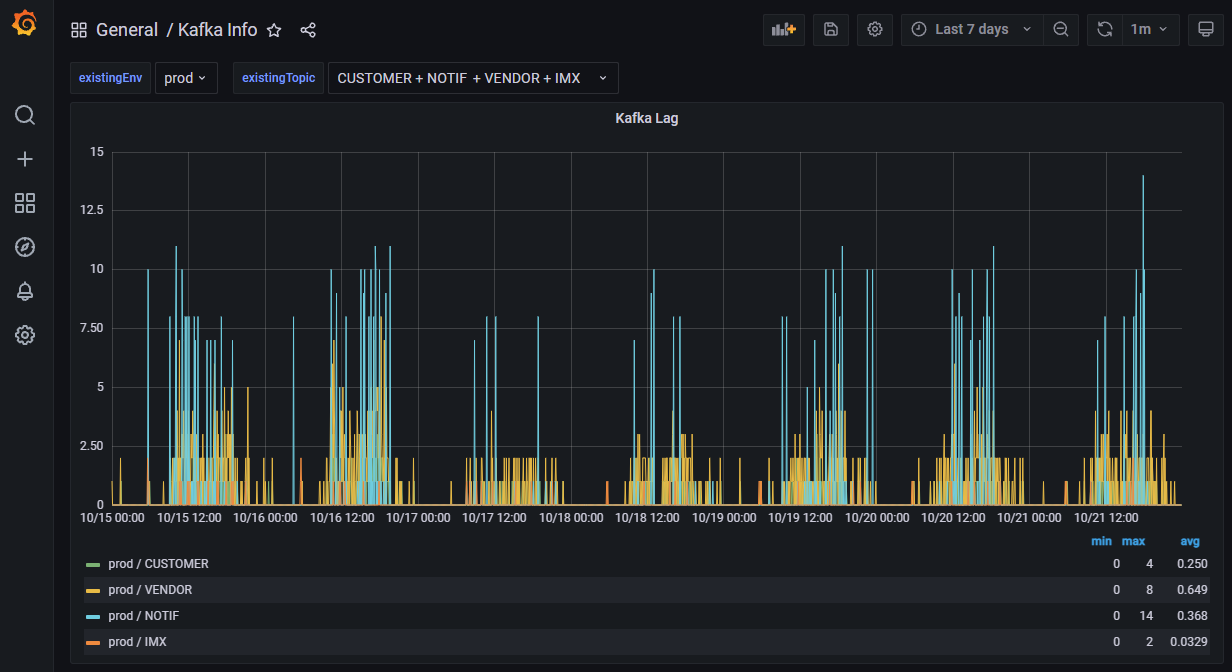

Kafka Manager provides a set of REST APIs to retrieve application metrics and monitor the cluster. Our internal study showed that the teams using Kafka had different functional needs and therefore different monitoring processes. For instance, a constant low lag on a topic is normal for some teams but would be a problem for others. For some projects, lag spikes are a major problem that must be detected, while for others this behavior is expected at least once a day. With this in mind, we decided to let each cluster manager set up a suitable monitoring process with the tools of their choice. Kafka Manager provides the APIs exposing the information needed to detect issues in your cluster, from both a technical and a functional point of view.

For example, you can retrieve the lag of a topic for a consumer group, or detect whether a topic is active or inactive in order to raise alerts. Other information, such as the number of available brokers, can also be used to monitor your cluster's activity.
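Both indicators can be derived from raw offsets. In a Java backend these offsets would typically come from `KafkaConsumer.endOffsets` and `Admin.listConsumerGroupOffsets`; here they are plain dictionaries. The "active" rule below (an end offset moved between two samples) and the handling of partitions without a committed offset are assumptions, not necessarily what Kafka Manager does.

```python
from typing import Dict, Optional, Tuple

TopicPartition = Tuple[str, int]


def consumer_lag(
    end_offsets: Dict[TopicPartition, int],
    committed: Dict[TopicPartition, Optional[int]],
) -> Dict[TopicPartition, int]:
    """Lag per partition = log end offset - committed offset.
    Assumption: a partition with no committed offset is counted from 0."""
    return {
        tp: max(end - (committed.get(tp) or 0), 0)
        for tp, end in end_offsets.items()
    }


def is_topic_active(
    previous_end_offsets: Dict[TopicPartition, int],
    current_end_offsets: Dict[TopicPartition, int],
) -> bool:
    """Active if any partition's end offset grew between two samples,
    i.e. new messages were produced in between."""
    return any(
        current_end_offsets[tp] > previous_end_offsets.get(tp, 0)
        for tp in current_end_offsets
    )
```

Whether a given lag or a period of inactivity should raise an alert is left to each team, in line with the design choice described above.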

Kafka Manager offers a configurable Elasticsearch connector that exports all metrics to your Elasticsearch instance, so you can track activity over time. Alternatively, you can call the REST APIs at regular intervals and store the cluster information yourself in the database of your choice.
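The "call the REST APIs at regular intervals" option can be sketched as a small polling loop. The fetch and store steps are injected as callbacks so any HTTP client and database can be plugged in. `fetch_from` uses only the standard library, and any URL you pass to it is your own; this sketch does not document Kafka Manager's actual endpoint paths.

```python
import json
import time
import urllib.request
from typing import Any, Callable, Dict

Metrics = Dict[str, Any]


def fetch_from(url: str) -> Callable[[], Metrics]:
    """Build a fetch callback reading a JSON document from url."""
    def fetch() -> Metrics:
        with urllib.request.urlopen(url, timeout=10) as response:
            return json.load(response)
    return fetch


def poll_metrics(
    fetch: Callable[[], Metrics],
    store: Callable[[float, Metrics], None],
    interval_seconds: float,
    iterations: int,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.time,
) -> None:
    """Fetch a metrics sample every interval_seconds and store it with its
    timestamp, so the cluster history can be charted later."""
    for i in range(iterations):
        store(clock(), fetch())
        if i < iterations - 1:  # no need to wait after the last sample
            sleep(interval_seconds)
```

Injecting `sleep` and `clock` keeps the loop testable without waiting or network access; in production a scheduler (cron, a Grafana data source, etc.) may be a better fit than a long-running loop.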

Monitoring example with Grafana

Kafka connect

A Kafka Connect module lets you manage connectors, workers and their associated tasks.

It also displays any errors that occur during deployment or while tasks are running.
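Task errors are exposed by the Kafka Connect REST API itself: `GET /connectors/{name}/status` returns the connector state plus a `tasks` list in which failed tasks carry `state: "FAILED"` and a Java stack `trace`. A minimal sketch summarizing those failures from an already-parsed response (the function name and output shape are our own choices):

```python
from typing import Any, Dict, List


def failed_tasks(status: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Summarize FAILED tasks from a Kafka Connect
    GET /connectors/{name}/status response (already parsed from JSON)."""
    failures = []
    for task in status.get("tasks", []):
        if task.get("state") == "FAILED":
            trace = task.get("trace", "")
            failures.append({
                "connector": status.get("name"),
                "task": task.get("id"),
                "worker": task.get("worker_id"),
                # first line of the stack trace usually holds the exception message
                "error": trace.splitlines()[0] if trace else "",
            })
    return failures
```

The connector itself can also fail independently of its tasks, so a complete check would inspect `status["connector"]["state"]` as well.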

Timeline

Kafka Manager can record each sensitive user action in order to build a timeline. As we work in a PCI context, tracking who changed the settings, and when, was mandatory.

If you use OpenID Connect authentication, the timeline shows which user performed each action.
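An audit timeline boils down to an append-only list of "who did what, on which resource, and when". A minimal in-memory sketch with hypothetical class and action names; a PCI-grade implementation would persist events to tamper-evident storage rather than keep them in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditEvent:
    user: str
    action: str
    target: str
    timestamp: datetime


@dataclass
class Timeline:
    events: List[AuditEvent] = field(default_factory=list)

    def record(self, user: str, action: str, target: str,
               when: Optional[datetime] = None) -> AuditEvent:
        """Append an immutable event; timestamps default to UTC now."""
        timestamp = when if when is not None else datetime.now(timezone.utc)
        event = AuditEvent(user, action, target, timestamp)
        self.events.append(event)
        return event

    def history(self, target: str) -> List[AuditEvent]:
        """Who changed this resource, and when, oldest first."""
        return sorted((e for e in self.events if e.target == target),
                      key=lambda e: e.timestamp)
```

Freezing `AuditEvent` prevents recorded entries from being modified after the fact, which matches the intent of an audit trail.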

Feedback

The Kafka Manager project has generated a lot of enthusiasm within our company, and also among external Kafka users. The tool is in production on several projects, allowing us to monitor our platforms reliably. We received a lot of user feedback, which helped us fix bugs and add features to the application.

Contributions

The tool is currently available as open source on GitHub.

A Docker image is also available on Docker Hub for quick deployment. It contains an OpenJDK runtime for the Java application and an nginx server.

We hope you will try Kafka Manager on your cluster soon, and we look forward to your feedback. All comments are welcome, whether positive or negative. Let us know what you think!

As Kafka Manager is a tool open to the community, do not hesitate to contribute to the project :)