DilBert, a Worldline Labs language model for Question Answering

Chatbots and Question Answering

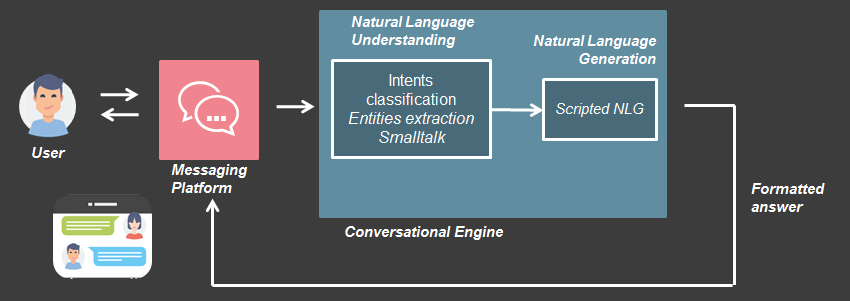

Today, chatbot solutions (Rasa, for example) mostly focus on two functionalities: intent classification and entity extraction.

Basically, if you ask a properly trained typical bot a question like “Where is the office of Mr. Jones”, it will understand your intent (“find office location”) and extract the entities ({“person”:“Mr. Jones”}), as long as these intents and entities were specified by the developers. It will then conditionally execute a task and provide a scripted answer.

This behavior can be used to implement very useful chatbots for customer support, booking assistance, etc. A common application for companies is to offer a bot as an additional channel through which customers can access general information from their website, documentation or FAQ. But manually specifying all the corresponding intents can be time-consuming, and it is often difficult to anticipate every possible question. This is where we can do better with something called Open Domain Question Answering (ODQA).

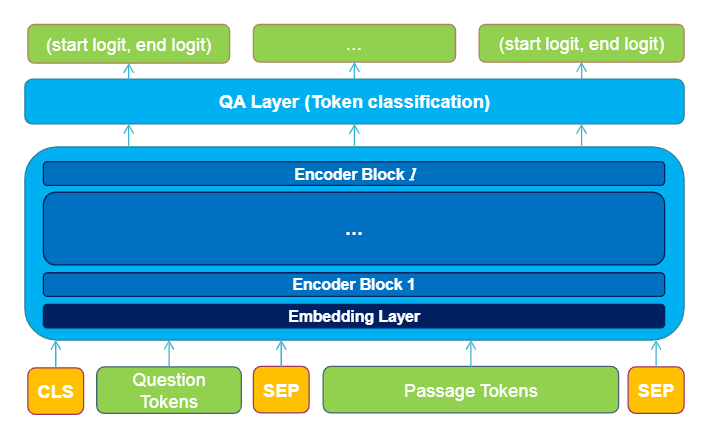

The objective of ODQA is to find relevant answers to a question in large sets of unstructured documents. ODQA engines have two major components: an ad-hoc passage retrieval module that selects relevant paragraphs/documents for the question at hand, and an extractive Question Answering (eQA) module that identifies the precise answer in the selected paragraphs. This can be used as a standalone system: you just provide a set of documents or web pages, and the bot has everything it needs to get started. But it can also be combined with intent classification in a unified system (for instance, using the ODQA engine only when no specified intent corresponds to the question).

But wait… how does it work?

For the information retrieval part, just think “search engines”. Information retrieval is basically the same problem and has similar solutions. For instance, the most commonly used approach (BM25, which is now the default in Elasticsearch) consists of:

- Computing word frequencies in each document and in the overall corpus.

- Using the words in the question to compute its similarity with every document.

- Ranking the documents according to that similarity.

Usually, documents are indexed by their words ahead of time, which speeds up the search when a question arrives.
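To make these steps concrete, here is a minimal sketch of BM25 scoring in plain Python. It is only an illustration: the `BM25Index` class, the naive tokenizer and the parameter values `k1=1.5` and `b=0.75` are assumptions chosen for this example, not the Elasticsearch or Lucene implementation.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Naive tokenizer for illustration: lowercase alphanumeric words.
    return re.findall(r"\w+", text.lower())


class BM25Index:
    """Toy in-memory BM25 index (hypothetical helper, not a library API)."""

    def __init__(self, documents, k1=1.5, b=0.75):
        self.k1, self.b = k1, b
        # "Pre-indexing": word frequencies per document, computed once.
        self.term_freqs = [Counter(tokenize(d)) for d in documents]
        self.doc_lens = [sum(tf.values()) for tf in self.term_freqs]
        self.avg_len = sum(self.doc_lens) / len(documents)
        # Number of documents containing each word (corpus statistics).
        self.doc_freq = Counter()
        for tf in self.term_freqs:
            self.doc_freq.update(tf.keys())

    def idf(self, term):
        n_docs, n_with_term = len(self.term_freqs), self.doc_freq.get(term, 0)
        return math.log(1 + (n_docs - n_with_term + 0.5) / (n_with_term + 0.5))

    def score(self, question, doc_id):
        tf, length = self.term_freqs[doc_id], self.doc_lens[doc_id]
        total = 0.0
        for term in tokenize(question):
            freq = tf.get(term, 0)
            if freq == 0:
                continue
            # Term frequency, dampened and normalized by document length.
            norm = freq + self.k1 * (1 - self.b + self.b * length / self.avg_len)
            total += self.idf(term) * freq * (self.k1 + 1) / norm
        return total

    def rank(self, question):
        # Document ids, from most to least similar to the question.
        ids = range(len(self.term_freqs))
        return sorted(ids, key=lambda i: -self.score(question, i))


documents = [
    "Mr. Jones has his office on the third floor",
    "The cafeteria opens at noon",
    "Parking is available behind the building",
]
index = BM25Index(documents)
ranking = index.rank("Where is the office of Mr. Jones")
```

Here `ranking` lists the document ids by decreasing score, so the first entry points to the paragraph that the reader module would look at first.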

The extractive Question Answering part is more complex, and good solutions only appeared in recent years. The current state of the art consists of Transformer-based models like Bert, Google’s natural language processing model. Bert is known for its outstanding predictive performance, but it is computationally expensive. In particular, it would not be able to search through hundreds of paragraphs in real time.

To solve this issue, we proposed to modify the Transformer architecture with a novel mechanism called “Delaying Interaction Layers” (DIL). The resulting variant, DilBert, achieves a 10-fold speedup with competitive predictive performance, and was nominated for the best academic paper award at the EGC21 conference. Here’s how it works…

The Transformer? Bert?

Three years ago, the picture below is what people meant when they said “Transformer”.

[Figure: What a Transformer used to be]

Today, in the natural language processing community, Transformer has a totally different meaning. It is a deep learning model, introduced in the paper “Attention is all you need”, which has two parts: an Encoder and a Decoder. The role of the Encoder is to take a text as a sequence of elements (let’s say a sequence of words, to simplify) and turn it into a sequence of contextualized vectors (more on that later…). The role of the Decoder is to take such vectors, also called embeddings, and generate a new sequence of words. We can train an Encoder-Decoder to do plenty of things. For instance, we can perform automatic translation: we feed a French sentence to the Encoder, take its output and use it in the Decoder to predict the equivalent sentence in English. But you can also use the Encoder and the Decoder independently, for other purposes.

The Decoder part alone was successfully used by OpenAI to build the famous GPT-2, which then evolved into GPT-3, a conditional and unconditional text generator which has wowed the internet many times by generating realistic lyrics, poems, Donald Trump tweets, reddit threads, and so on.

And the Encoder part alone was successfully transformed* by Google into Bert, not the Sesame Street sensation, but the famous language model that outperforms humans in several natural language processing tasks.

*we are talking about a Transformer after all.

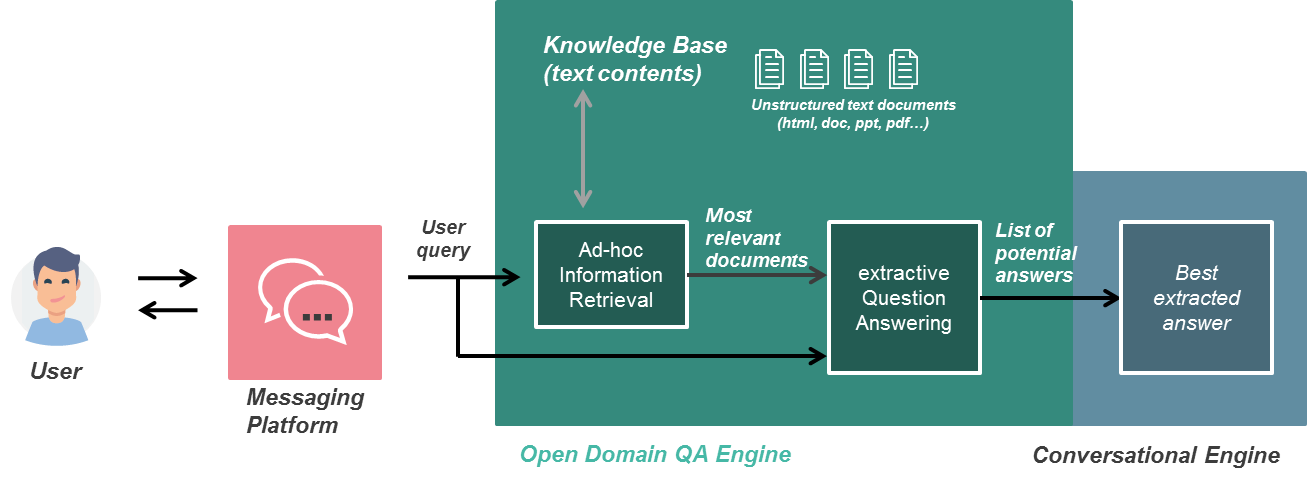

In our case, we use Bert to perform extractive Question Answering. Given a question and a paragraph, the goal is to locate the answer, if it exists, in the paragraph. Here is an overview of Bert’s architecture when it is used for this task:

[Figure: Architecture of the Transformer Encoder when used for Question Answering]

Bert takes as input a text sequence that is the concatenation of the question and the paragraph. The embedding layer converts each element (word or token) into a first, generic vector representation. Then the l encoder blocks contextualize each embedding with respect to the others in the sequence, using a mechanism called self-attention (more on that here). This is how, from a sequence of words, we obtain the sequence of contextualized embeddings mentioned earlier.

For Question Answering, this contextualization is useful because, at the end of the Encoder, each token of the paragraph has been contextualized by the question. Then a simple neural network can take each contextualized embedding and try to predict if it belongs to the answer or not.
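As an illustration of that last step, here is a small sketch of how an answer can be read off per-token scores. It assumes one common formulation, in which the prediction layer gives each token a "start" score and an "end" score and the answer is the best-scoring span; the function name, the scores and the `max_answer_len` limit are made up for the example and are not taken from our implementation.

```python
def best_answer_span(start_scores, end_scores, max_answer_len=30):
    """Return (start, end) token indices maximizing start + end score."""
    best_span, best_score = None, float("-inf")
    for start, start_score in enumerate(start_scores):
        # Only consider spans that end at or after their start.
        last = min(start + max_answer_len, len(end_scores))
        for end in range(start, last):
            score = start_score + end_scores[end]
            if score > best_score:
                best_span, best_score = (start, end), score
    return best_span


# Hypothetical scores, as a prediction layer could produce for a paragraph.
tokens = ["his", "office", "is", "on", "the", "third", "floor"]
start_scores = [0.1, 0.2, 0.0, 0.3, 2.1, 1.0, 0.2]
end_scores = [0.0, 0.1, 0.0, 0.1, 0.2, 0.9, 2.5]

start, end = best_answer_span(start_scores, end_scores)
answer = " ".join(tokens[start:end + 1])
```

With these made-up scores, the selected span covers the tokens "the third floor".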

But this comes at a great cost… because each encoder block performs a large number of computations. The goal of our work was to speed things up. And we did that with a mechanism that we called “Delaying Interaction”.

Delaying Interaction for Efficient Open Domain Question Answering

If 1000 questions are asked and there are 1000 paragraphs to search in, Bert has to make predictions for all 1 000 000 question-paragraph pairs… Yet in a typical setting, the set of documents to search in is rather static, so it is tempting to process it ahead of time. With Bert, however, it is not possible to save or anticipate computations, because the processing of a paragraph depends on the question and vice versa.

To solve this issue, we decided to change the architecture. The key idea is to “delay” the interaction between question and paragraph:

[Figure: New architecture of the Transformer with Delayed Interaction]

Basically, the two segments of the input (question and passage) go independently through the input Embedding Layer and the first k Encoder blocks (the non-interaction layers). Then the intermediate representations of the two segments are concatenated and go together through the last l-k blocks (the interaction layers).

This makes it possible to organize the computations much more efficiently:

- For each paragraph: we can pre-compute the first k blocks.

- For each asked question: we compute the first k blocks once (not for each passage).
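The idea can be sketched with a toy encoder in NumPy. This is not our actual implementation: the blocks below use random weights, a single attention head and a simplified feed-forward layer, and every name (`encoder_block`, `encode_segment`, `interact`, `paragraph_cache`) is hypothetical. The point is only to show where the split between non-interaction and interaction layers happens, and what can be cached.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, L_BLOCKS, K = 16, 12, 10  # embedding size, total blocks l, delayed blocks k


def layer_norm(x):
    mean = x.mean(axis=-1, keepdims=True)
    return (x - mean) / (x.std(axis=-1, keepdims=True) + 1e-6)


def make_block():
    # Random matrices standing in for trained parameters.
    names = ("q", "k", "v", "ff")
    return {n: rng.normal(scale=DIM ** -0.5, size=(DIM, DIM)) for n in names}


def encoder_block(x, w):
    # Single-head self-attention: every token attends to every token in x.
    q, k, v = x @ w["q"], x @ w["k"], x @ w["v"]
    scores = q @ k.T / np.sqrt(DIM)
    scores = scores - scores.max(axis=-1, keepdims=True)
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=-1, keepdims=True)
    x = layer_norm(x + attn @ v)
    # Simplified position-wise feed-forward layer.
    return layer_norm(x + np.tanh(x @ w["ff"]))


BLOCKS = [make_block() for _ in range(L_BLOCKS)]


def run_blocks(x, blocks):
    for w in blocks:
        x = encoder_block(x, w)
    return x


def encode_segment(embeddings):
    # First k blocks: question and paragraph are processed separately.
    return run_blocks(embeddings, BLOCKS[:K])


def interact(question_repr, paragraph_repr):
    # Last l-k blocks: the two segments are concatenated and interact.
    joint = np.concatenate([question_repr, paragraph_repr], axis=0)
    return run_blocks(joint, BLOCKS[K:])


# Offline: pre-compute the first k blocks for every paragraph.
paragraphs = [rng.normal(size=(n, DIM)) for n in (20, 35)]
paragraph_cache = [encode_segment(p) for p in paragraphs]

# Online: encode the question once, then run only l-k blocks per paragraph.
question = rng.normal(size=(6, DIM))
question_repr = encode_segment(question)
outputs = [interact(question_repr, p) for p in paragraph_cache]
```

The random arrays stand in for the embeddings of a 6-token question and of two paragraphs. Note that `paragraph_cache` never depends on the question, which is exactly what makes pre-computation possible.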

In the end, instead of having l blocks computed 1 000 000 times, we have k blocks computed 1000 + 1000 times and only l-k blocks computed 1 000 000 times. In the base version of Bert, there are l = 12 blocks in total. In our work, we were able to propose a DilBert (Delaying Interaction Layers in Bert) variant with k = 10 non-interaction layers, which speeds up computations by one order of magnitude.
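The counting argument above can be written down in a few lines. The function name is made up for this example, and the count deliberately ignores sequence lengths and everything outside the encoder blocks, so it is only a rough proxy for the workload and is not meant to reproduce the speedup we measured.

```python
def block_evaluations(n_questions, n_paragraphs, l=12, k=0):
    """Count encoder-block evaluations with k non-interaction blocks."""
    pairs = n_questions * n_paragraphs
    # The first k blocks run once per question and once per paragraph.
    non_interaction = k * (n_questions + n_paragraphs)
    # The last l-k blocks run once per question-paragraph pair.
    interaction = (l - k) * pairs
    return non_interaction + interaction


bert = block_evaluations(1000, 1000, l=12, k=0)      # 12 * 1 000 000
dilbert = block_evaluations(1000, 1000, l=12, k=10)  # 10 * 2000 + 2 * 1 000 000
```

With k = 0 we recover standard Bert, where all 12 blocks run for every pair (12 000 000 block evaluations). With k = 10, the count drops to 2 020 000, and the 10 000 evaluations of paragraph blocks can even be done offline.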

And, surprisingly, DilBert benefited from the removed connections, which act as a form of regularization: it generalized better in open domain question answering and hence gave better answers.

Practical interest

DilBert makes it possible to search for information in large sets of documents, with good accuracy and in real time! To put this into practice, we implemented DilBert in a chatbot that answers questions based on the entire English Wikipedia (yes, exactly, 40 million paragraphs, that’s right…).

For this experiment, we used the Anserini toolkit, built on Lucene, for ad-hoc paragraph retrieval, then DilBert as the reader (for the extractive question answering part):

[Figure: Our Question Answering pipeline]

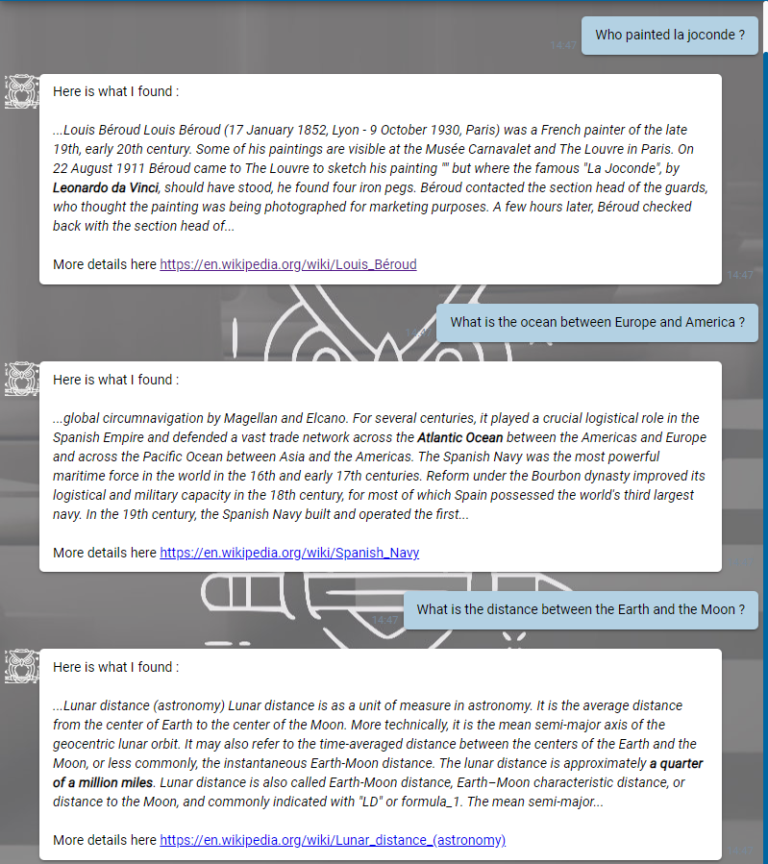

We implemented the ODQA engine as a service using the Python Flask library, and here are the results in a chatbot:

[Figure: Our newest Wiki chatbot with a DilBert brain]

You can ask any question, and the bot will try to find an answer in Wikipedia. It then replies with the paragraph (among the ones preselected by Lucene) in which it found the answer, with the precise answer to the question (extracted by DilBert) in bold. Note that, from the moment the user asks a question, the search through the whole English Wikipedia is performed and the answer returned in less than a second.
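The overall retrieve-then-read flow can be sketched as follows. Everything here is a stand-in: `toy_retrieve` and `toy_read` are hypothetical placeholders for the Lucene-based retriever and the DilBert reader, and the Markdown-style `**bold**` markup is an assumption about how the answer could be highlighted.

```python
import re


def answer_question(question, retrieve, read, top_n=10):
    """Retrieve candidate paragraphs, read each, return the best one."""
    best_paragraph, best_answer, best_score = None, None, float("-inf")
    for paragraph in retrieve(question, top_n):
        answer, score = read(question, paragraph)
        if answer and score > best_score:
            best_paragraph, best_answer, best_score = paragraph, answer, score
    if best_paragraph is None:
        return None
    # Reply with the paragraph, highlighting the extracted answer.
    return best_paragraph.replace(best_answer, f"**{best_answer}**", 1)


def words(text):
    return set(re.findall(r"\w+", text.lower()))


CORPUS = [
    "Paris is the capital of France.",
    "Berlin is the capital of Germany.",
]


def toy_retrieve(question, top_n):
    # Stand-in retriever: rank paragraphs by word overlap with the question.
    ranked = sorted(CORPUS, key=lambda p: -len(words(question) & words(p)))
    return ranked[:top_n]


def toy_read(question, paragraph):
    # Stand-in reader: "extracts" the first word, scored by word overlap.
    score = float(len(words(question) & words(paragraph)))
    return paragraph.split()[0], score


reply = answer_question("What is the capital of France?", toy_retrieve, toy_read)
```

In a real system, the two callables would wrap the search index and the trained reader. The structure of the loop stays the same.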

Additional results

The Delaying Interaction mechanism actually goes beyond Bert: it is generic to Transformer-like models. We applied it to Bert to begin with, but it turns out it can be applied to other Encoders such as Roberta, XLNet, AlBert and so on… In our research paper, we experimented with both Bert and AlBert to demonstrate this genericity.

Other resources

This work is part of a bigger picture, a Worldline research and development program called SmarterBots, which aims at making chatbots more intelligent, more efficient and more useful. If you want to know more about this, do not hesitate to contact me.

You can also take a look at the video of our presentation at EGC (in French) and the source code of this work.