K8S, Helm & Co: Beyond the Hype

The good, the bad and the hypest

For a few years now, Kubernetes and its ecosystem have been among the most hyped technical platforms. Many people want to deploy their applications on it and promote its scalability features. Others bash it, criticizing its complexity and the total cost of deploying such a platform.

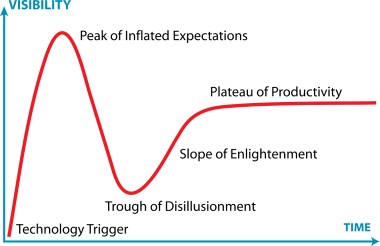

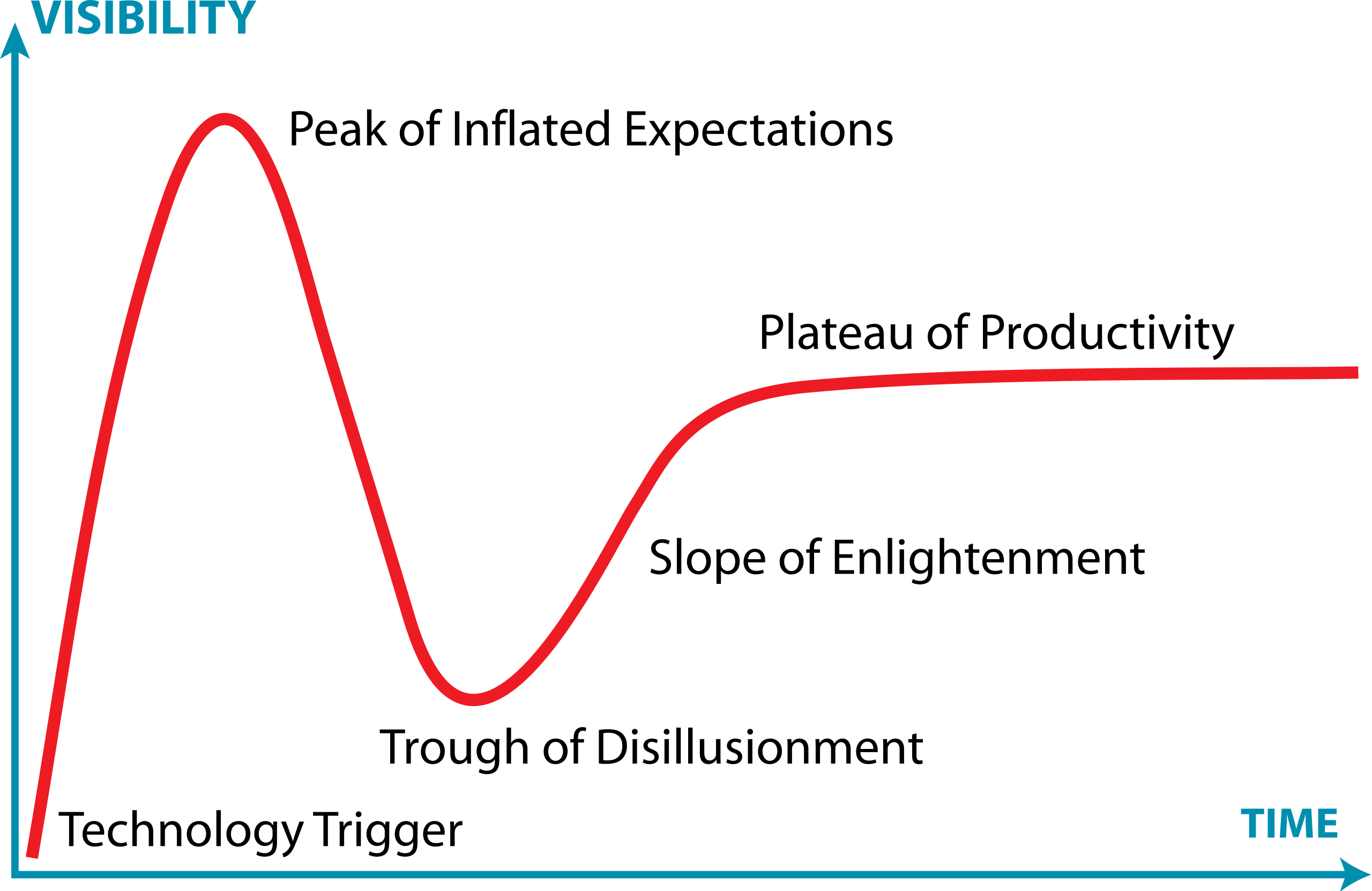

As you have probably guessed, this technology is a textbook illustration of the famous Gartner Hype Cycle.

After gaining some experience with this platform (and with many others in the past :) ), I will try to weigh the pros and cons of what I think matters.

Obviously, this article is not a reference. I will probably omit some features or technical points.

Why would you choose to deploy on Kubernetes, and why should you not?

Before presenting the benefits of cloud technologies, I will start with the counterarguments.

Do you (really) need it?

This topic is broad, and the question is tricky to answer in a single article, especially for the IT crowd, which usually follows market trends and new technologies.

Before rushing into this technology, which is really interesting by the way, it is important to reflect on the following questions:

- Are my SLOs constraining (e.g. 99.95% availability)?

- What are my application deployment lifecycles?

- Who manages the platforms?

To sum up, you have to know whether it is worth it! If you have an application that needs to scale dynamically, withstand spikes and be updated without any downtime, OK, Kubernetes fits your needs. However, if you “only” have a simple application without any constraining SLOs, the choice could be debatable, though not out of the question!
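
To make the SLO question more concrete, an availability target can be converted into a downtime budget. The figures below are a back-of-the-envelope sketch that assumes a 30-day month and counts every minute of unavailability against the target; your own SLO may define its measurement window differently.

$$
\text{downtime budget} = (1 - \text{availability}) \times \text{period}
$$

$$
(1 - 0.9995) \times 30 \times 24 \times 60\ \text{min} = 21.6\ \text{min per month}
$$

In other words, a 99.95% target leaves a little over 21 minutes per month for deployments, incidents and maintenance combined, which is the kind of constraint where zero-downtime updates start to matter.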

Are you fit for it?

Kubernetes and its ecosystem can be complex to understand. If your company opts for an on-premises setup, it gets worse. You should have a dedicated team that manages it and provides support to development teams.

But make no mistake! If your role is to create and develop business software, it will be really difficult to also build expertise in Kubernetes platform administration. You can use the platform and feel comfortable with it, but administering it can be complicated.

If I could give you one piece of advice, it would be to deploy your applications to Kubernetes only if a support team exists within your company and can help you during the whole project lifecycle, from design to production deployment.

This is true if you use a public cloud provider such as Google Cloud or AWS. It is even more true if you use an on-premises CaaS such as OpenShift.

Are your applications cloud native?

Beyond Kubernetes, you should develop coding and design skills.

You should consider the twelve-factor methodology from the beginning of your project. You don’t necessarily have to create microservices embedding the most hyped technologies. It is possible to create light, stateless modular monoliths. Most of these factors are widely considered design and coding best practices. Applying them won’t be a big deal.

You should then develop skills in container technologies (Docker) and their constraints. If you are not used to working with containers (building, deploying, dealing with a registry, …), it may be better to define a roadmap with intermediate steps.

To sum up, these subjects should be addressed and managed by all the stakeholders: developers, project managers and ops. This technology is a major step forward.

If you don’t feel comfortable deploying your application to Kubernetes, or if you still have to develop skills on these topics, you should wait before moving to it. You can never be blamed for not choosing Kubernetes if you don’t meet all the requirements. On the contrary…
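
As an illustration of one of the twelve factors (store configuration in the environment), here is a minimal sketch of how a stateless application might receive its settings on Kubernetes. All names (`my-app-config`, `my-app`, the image, the keys and the URL) are hypothetical and only there for illustration.

```yaml
# Hypothetical ConfigMap holding environment-specific settings.
apiVersion: v1
kind: ConfigMap
metadata:
  name: my-app-config
data:
  LOG_LEVEL: "info"
  PAYMENT_API_URL: "https://payment.example.internal"
---
# Hypothetical Deployment: the same image runs in every environment,
# only the ConfigMap content differs.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: registry.example.com/my-app:1.0.0
          envFrom:
            - configMapRef:
                name: my-app-config   # every key becomes an environment variable
```

Because nothing environment-specific is baked into the image, the same artifact can be promoted from one environment to the next, which also works for a modular monolith.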

Do you interact with third-party services, and are they compatible with K8S?

Usually, as long as you stay inside your K8S cluster, everything is fine. As soon as you interact with third-party services, it can get complicated. When you design and develop “on-premises” applications, you often have to connect to third-party services that are not cloud oriented, such as HSMs or file gateways. Some protocols may be incompatible with your Kubernetes setup.

You will then have to check all the platforms and software involved in your design to ensure they are compatible with this kind of architecture. It can be a big deal. Help from a support team (see above) will be really useful.

Why take the step?

Scalability and fault tolerance

From a personal point of view, these key features were what interested me first. If you have ~99.9% availability SLOs, Kubernetes will be valuable in your system design. After days of struggling with YAML files, you can benefit from automatic scaling. It can be driven either by technical indicators (the easiest way) or by business (and technical) indicators exposed through Prometheus. Yes, it’s another technology to learn…

Beyond scalability, you will have to deal with observability from the beginning of your project instead of taking care of it after going to production. For instance, you will have to configure your Kubernetes deployment to specify how to check whether your application is ready to accept requests (readinessProbe) and whether it is still alive (livenessProbe). These probes help Kubernetes decide when to send traffic to a pod and when to restart a container (e.g. after a crash).
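
As a rough idea of what scaling on a business indicator might look like, here is a sketch of a HorizontalPodAutoscaler using the `autoscaling/v2` API. It assumes that a metrics adapter (for instance the Prometheus Adapter) already exposes a per-pod metric to Kubernetes through the custom metrics API; the metric name `http_requests_per_second` and the threshold are hypothetical.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-deployment
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-deployment
  minReplicas: 1
  maxReplicas: 4
  metrics:
    - type: Pods
      pods:
        metric:
          name: http_requests_per_second   # hypothetical metric served by the adapter
        target:
          type: AverageValue
          averageValue: "100"              # scale out above ~100 requests/s per pod
```

The extra moving part is the adapter itself: without it, the autoscaler has no way to read Prometheus metrics, which is why CPU-based scaling remains the easiest starting point.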

    livenessProbe:
      failureThreshold: 3
      httpGet:
        path: /actuator/health/liveness
        port: http
        scheme: HTTP
      initialDelaySeconds: 30
      periodSeconds: 10
      successThreshold: 1
      timeoutSeconds: 1
    ports:
    - containerPort: 8080
      name: http
      protocol: TCP
    readinessProbe:
      failureThreshold: 3
      httpGet:
        path: /actuator/health/readiness
        port: http
        scheme: HTTP
      initialDelaySeconds: 30
      periodSeconds: 30
      successThreshold: 1
      timeoutSeconds: 1

I find this practice virtuous. Obviously, you don’t need to deploy your application to Kubernetes to implement observability. However, in this case, it is mandatory and implemented from the outset, during the development phase.

Automatic scaling is also very interesting. We often observed that many production servers were underused. With Kubernetes, you run only the instances your use case needs and scale only when necessary. One constraint we observed with this feature is that we cannot completely control the number of available instances. Kubernetes manages this, taking into account all the settings you have declared in your Helm templates.
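
The probe paths above resemble the health groups exposed by Spring Boot Actuator. Assuming a Spring Boot application (version 2.3 or later) with the Actuator dependency on the classpath, which the article does not state explicitly, a minimal `application.yaml` sketch to expose those endpoints could look like this:

```yaml
# application.yaml
# Assumption: Spring Boot 2.3+ with spring-boot-starter-actuator.
management:
  endpoint:
    health:
      probes:
        enabled: true   # exposes /actuator/health/liveness and /actuator/health/readiness
  endpoints:
    web:
      exposure:
        include: health
```

Spring Boot enables these health groups on its own when it detects a Kubernetes environment; setting the property explicitly also makes them available when running locally, which helps when testing the probes during development.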

    apiVersion: autoscaling/v1
    kind: HorizontalPodAutoscaler
    [...]
    spec:
      maxReplicas: 4
      minReplicas: 1
      scaleTargetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: my-deployment
      targetCPUUtilizationPercentage: 80
    [...]
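
To get a feel for how Kubernetes picks the number of instances with these settings, the autoscaler's documented rule of thumb can be worked through by hand. The numbers below (2 running pods at an average of 120% CPU utilization, measured against the pods' CPU requests) are an invented example, not a measurement.

$$
\text{desiredReplicas} = \left\lceil \text{currentReplicas} \times \frac{\text{currentMetricValue}}{\text{desiredMetricValue}} \right\rceil
$$

$$
\left\lceil 2 \times \frac{120}{80} \right\rceil = \lceil 3 \rceil = 3
$$

The result is then clamped between minReplicas and maxReplicas, so with the settings above the application would never run more than 4 pods, whatever the load.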

Deployment

Before deploying (in real life), you may have to set up a CI/CD pipeline that orchestrates your deployments across all your environments. Spoiler alert! It can be a big deal! But once it is done, you will see it is worth it. Your deployments will be really smooth (mostly). OK, we can do that on good old virtual machines. However, we can improve on it with Kubernetes by using its zero-downtime capability with just a few lines:

    strategy:
      type: RollingUpdate
      rollingUpdate:
        maxSurge: 1
        maxUnavailable: 0
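
For context, the `strategy` block sits under `spec` in a Deployment manifest. The sketch below shows where it fits; the names, image and replica count are hypothetical. With `maxSurge: 1` and `maxUnavailable: 0`, Kubernetes starts one extra pod, waits for it to pass its readiness probe, and only then removes an old one.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-deployment
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1         # at most one pod above the desired count during a rollout
      maxUnavailable: 0   # never go below the desired count
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: registry.example.com/my-app:1.1.0   # hypothetical image
          ports:
            - name: http
              containerPort: 8080
          readinessProbe:
            httpGet:
              path: /actuator/health/readiness
              port: http
```

The readiness probe is what makes the rollout safe: without it, Kubernetes would consider a new pod available as soon as its container starts, even if the application cannot serve requests yet.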

Infrastructure As Code

When we think about Kubernetes and cloud technologies, we do not necessarily think about Infrastructure as Code. However, this practice is, in my opinion, one of the most useful.

Describing your system in versioned files enables you to test your infrastructure from the beginning of your project. It prevents integration errors (mostly). Software updates are also much faster.

By the way, Terraform and Ansible already enable this practice. With Kubernetes and cloud technologies, I think this concept goes further. Fortunately, you can use Terraform to provision your cloud platform :).

For instance, if you have to manage the operating systems of your virtual machines, you will probably waste time updating them and fixing the resulting errors. With Infrastructure as Code, you can test and validate your updates automatically.
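
One possible way to validate versioned infrastructure files automatically is to check the Helm chart on every change. The sketch below uses GitHub Actions syntax purely as an illustration, since the article does not name a CI tool; the workflow file name, the `chart/` path and the release name `my-app` are hypothetical.

```yaml
# .github/workflows/chart-check.yaml (hypothetical file name and chart path)
name: chart-check
on:
  pull_request:
    paths:
      - "chart/**"
jobs:
  validate:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # Assumption: the helm CLI is available on the runner.
      - name: Lint the chart
        run: helm lint ./chart
      - name: Render the templates without touching a cluster
        run: helm template my-app ./chart > /dev/null
```

Both commands run locally against the files, so a broken template is caught at review time rather than during a deployment.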

To sum up

As you have probably understood, this galaxy of technologies is really interesting and could help you with some of your projects. Before using it, be smart. It is not a “silver bullet”. You will probably have to define a roadmap and settle some questions (e.g. which kinds of applications are eligible in your context?) before deploying your applications on either an internal or an external cloud platform.