Back to basics - Logging

The log: the disliked, the neglected, the misunderstood and yet probably your best friend.

When we talk about logs, it is quite simple to identify some bad practices or misconceptions:

- Logs are a development/debugging stuff

- Logs are unstructured data… I mean a mess

- Let’s dive into server logs when something goes wrong:

Many considerations are set aside:

- Readability

- Usability

- Concision

- Consistency

- Performance

- …

Let’s improve the situation together.

Before writing our first log line, let’s put the pen down and ask ourselves: Why are we writing logs?

Why?

It’s a strange question, but still relevant because it will drive everything. You may want logs for debugging, functional tracing, etc.

But here at Worldline, our core business is building and operating complex systems for our customers. Consequently, the objective is well defined.

The ultimate place where logs will be written and used will be production.

Therefore this is the primary objective of the logs: to ensure the operational monitoring of your system.

What do we mean by operational monitoring?

- Be able to give information about the system (version, configuration, dependencies, …)

- Be able to know if the system is working well in compliance with the SLO (Service Level Objectives)

- In case of bad behavior, be able to give all the necessary information to identify the problem (Troubleshooting)

As mentioned earlier, you may have different expectations regarding the objective. For example, it is quite common to see logs used for business monitoring. But mixing things up can be a bad idea, especially when the system is complex. About business monitoring, it should be a functional requirement of your system and should deserve specific design and development: dedicated files, events logs, specific DB structure, dedicated reporting… or something I love: well defined metrics in a beautiful Grafana dashboard.

To whom?

Who is the recipient of your logs? Generally it’s not you. It may seem trivial, but it’s still a common mistake. Logs are written for operators and also for machines. That is why we must ensure the readability, comprehensibility and processing ability of our writing.

What?

Logs are what we call an NFR: Non-Functional Requirement. Like any other NFR, it must be given the same degree of attention as for a functional requirement. This means that you must produce specifications, have allocated time and resources, create tests, organize reviews, set up a feedback loop and be in a continuous improvement process.

Let’s take a look at the specifications.

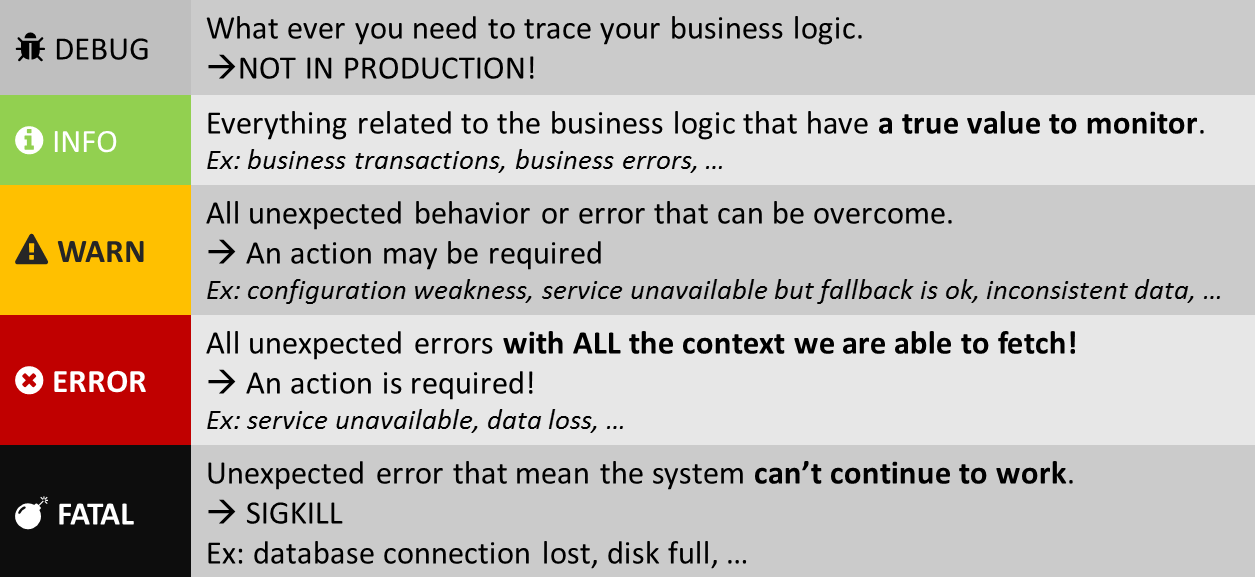

Levels

To be short it’s the importance of the message… but not only that!

It’s crucial, every time you produce a logging statement, to figure out which logging level is appropriate.

For traditional needs, we find the following levels: (You may want more levels to meet special needs… but you shouldn’t. Stay KISS ;)

DEBUG and/or TRACE

It’s very detailed information, intended for development or targeted troubleshooting. You might keep trace messages for a short period of time after deployment on production environment. But treat these log statements as temporary, that should or might be eventually turned-off. The distinction between DEBUG and TRACE is not simple. But if you put logging statement and remove it after the feature has been developed and tested, it should probably be on TRACE level.

By design DEBUG logs can be especially verbose. A good approach to keep readability and to reduce disk usage or I/O is to adopt some conventions:

Example:

- » Entering a method

- « Exiting a method

- – Prefix all logging statements with a method name

TRACE >> execute()

DEBUG -- execute() > Got param: 'myParam'.

TRACE << execute() < Returning: 'myParam'.

Speaking of method tracking, it is quite common to rely on AOP (Aspect-oriented programming) in order not to pollute the code and to add dynamic control.

INFO

It’s something important but non critical to say. We should be able to understand INFO messages and quickly find out what the application is doing.

For example if an application is all about booking train tickets, there should be only one INFO statement per each ticket saying: \[Who\] booked ticket from \[Where\] to \[Where\]

Logs produced by the application at INFO level should only concern non critical messages: important functional informations but also functional errors. Beware: A functional error is not an exploitation error. A functional error (except in cases which are duly justified) does not involve an operational action. Therefore this kind of error should stay at a INFO level.

For instance, the payment is refused because the balance is low is not an error. It’s a functional behavior that lead to a clean error on the UI of the end user but not for the system.

WARN

It concerns any unexpected behaviors or errors that can be overcome. The process can continue, but with some extra caution. A system can tolerate warning messages, but they must always be investigated and justified.

From this level, it will be essential to collect as much information as possible in order to enrich the context of the error and thus facilitate its analysis.

Example: application running in development mode; administration console is not secured with a password

ERROR

Something wrong has happened, and it needs to be investigated immediately. No system can tolerate messages at this level in production. An error level message should be a trigger for an alert to your First Level Support team.

Example: NPE; external service unavailable; mission critical use case cannot be continued

FATAL

Something terrible has happened, and the system can’t continue to work! Emergency actions are triggered: restarting the service, purging data, sacrificing chicken, …

Example: Database connection lost; disk full; database unavailable ; mission critical use case cannot be continued

Here is a summary regarding levels:

Now, let’s have a look to the content.

Payload

Writing a relevant message is not a simple task.

Concision and meaning

Each logging instruction must be understandable to the developer but even more so to the operator. It’s like a tweet, you are writing not for yourself but for someone else. And like a tweet you have to be extremely concise. Finally, the usefulness must be well established. If the statement has no operational use, it means that it should not exist. This is especially important for the INFO level and above.

Useful event context

Context is critical to figure out what is going on. Without an appropriate context, the meaning could be dramatically wrong:

Each logging statement must provide a useful context in which it occurred.

The context contains a minimum set of information necessary to isolate the action and answer certain questions:

- What do we do now?

- Who does it?

- When do we do it?

- How do we do this?

- Where does the action take place? (server, region…)

Sometimes you need to enrich the context with additional information that requires additional calls. It’s especially true if you are dealing with an error.

Error handling

Perhaps the most important part about log production.

The error must be considered a first-class citizen when we have to report it. The purpose here is to provide all the necessary information that could help diagnose the problem.

- What’s going on?

- What went wrong?

- How bad it turned out to be?

- Can we recover from it?

- In case we can recover, briefly describe how or trigger the recovery action.

Here the context is critical! We must provide the callee context but also create specific context that could help for a pre-diagnosis.

When the error is predictable, giving some suggestion is welcome! Ex: Template file not found. Please check template configuration section in application.yml file.

Security and privacy

It is important to keep in mind when writing a log statement that sensitive information should not be exposed. This is obvious for critical sectors such as health, defence, banking, payments, etc. But today it is also important for all systems that process personal data. It’s even a EU legal requirement thanks to the GDPR .

Your logging framework should provide helpers to mask or tokenize sensitive information.

Case study

Let’s have a look to a real life example extracted from a triple A critical service in production:

class Foo {

private static final Logger LOG = LoggerFactory.getLogger(Foo.class);

@Tracable // <1>

public FooResult doFoo(FooParam param) throws DoFooException, TechnicalException {

Stopwatch stopwatch = Stopwatch.createStarted(); // <2>

try {

LOG.debug("about to do foo with {}", param); // <3>

...

LOG.info("did foo with {} ({})", param, stopwatch.elapsed(MILLISECONDS)); // <4>

} catch (SomeBusinessException ex) { // <5>

LOG.info("failed to do foo with {} because {} ({})",

param, ex.getMessage(), stopwatch.elapsed(MILLISECONDS)

);

throw new DoFooException("failed to do foo", arg, ex);

} catch (TechnicalException ex) { // <6>

ex.addContext("doFoo", param);

LOG.error("error doing foo with {} ({})",

param, stopwatch.elapsed(MILLISECONDS), ex

);

throw ex;

}

}

}

<1>: The @Tracable annotation is a helper to add TRACE level log surrounding the method call. The purpose of this proxy is to trace the method input with parameters and trace the method output with response and time spent inside the method.

Sample output:

[2017-10-24T06:25:06,014][TRACE][o.w.p.s.Foo] >> doFoo() > {id: 123, bar: "hello"}

[2017-10-24T06:25:06,482][TRACE][o.w.p.s.Foo] << doFoo() < {result: "world"} (400ms)

Beware: This kind of helper have to be used with caution.

Blindly trace input and output can harm security and performances.

The toString method of I/O objects have to be carefully implemented.

This kind of helper should only have a debugging purpose and should be manageable.

<2>: Stopwatch is a helper (provided here by Guava) to compute the elapsed time. Its usage is optional (We could rely on log timestamps to compute this duration but that will need a corelation processing).

<3>: This first DEBUG statement is used to provide the pre functional call with the input context. We could have used the INFO level, but in this case the DEBUG level is used because the method is heavily used. So, in order to reduce the disk I/O in production, we chose to use the DEBUG level. It’s one of the reason we need to use the Stopwatch helper. Those assumptions are here to illustrate that the log strategy can depend on NFR.

<4>: This first INFO statement is used to provide the post functional call with the input context. The INFO level is used because useful for service operation. Therefore, concision, understandability and context have to be carefully produced. Time spent into the method is also provided because the method don’t have pre functional INFO log.

The “did foo” message is a poor example but should be carefully choose. This message has to be constant, concise and understandable.

<5>: A business exception occurred. That’s fine. We have to trace the business error in a readable way with the context (arguments, intermediate variables, cause exception message). We have no need to provide the stacktrace. The cause message should contain relevant information about the rejection cause. In order to preserve the context, the cause exception is encapsulated into a business exception with the current context. It allows the caller to deal itself with the error. This is a business error, so that’s fine to use the INFO level.

<6>: Something truly bad happens! An technical exception occurred. That’s not fine. We have to trace the error. In this case we have no clue of what happens and we have no hint to propose. Therefore we are forced to add the stacktrace to the context. The error have to contain every useful information for troubleshooting. The exception is escalated with additional context informations in order to allow the caller to deal with the error (or contribute to the context enrichment).

How?

Format

Logs are by definition unstructured data… really? Well, they shouldn’t!

For instance HTTP access logs are structured and follow standardized text file format such as NCSA Common Log Format (CLF ) or W3C Extended Log Format (ELF ).

Should we do the same for other types of logs? The answer is yes.

About the format, the inline text format is the most widely used. The main reason is that it is human readable and convenient to process using simple UNIX tools. But the paradigm has evolved. Today, our services are more and more “Cloud native”. Access to the production machine is prohibited for safety and common sense reasons. We can continue to use inline text format because there are many powerful tools able to analyze and extract metadata (such as Logstash , FluentD , …..). But we can help by using formats that are more machine friendly like JSON. It is the easiest to parse and fits perfectly for the context of our statement.

Many loggers are able to produce JSON and also provide tools to make it readable by humans (format and colors).

Performances

Writing a trace is not a free operation. In general, you write to disk synchronously. It’s a cost in terms of I/O. If your system is heavily loaded, overall performance may be affected due to I/O saturation. Not surprisingly, you can improve the overall performance of an application by removing all logs.

True Story: I have seen a performance improvement of up to 60% on a project once. It’s just huge.

This is of course undesirable but its a relevant indicator that you should review your logging policy.

Again, concision and usefulness will be your friend.

Another important cost could be memory. Often, you need to build a logging instruction and its context. But if the LEVEL of this module is inactive, we will make unnecessary allocations. That’s why you need to keep this in mind and use your logging tools to limit this problem.

Example:

// Unnecessary allocations

log.debug("Found " + records + " records matching filter: '" + filter + "'");

// Better approach

log.debug("Found {} records matching filter: '{}'", records, filter);

// Event better approach if you add context creation

if (log.isDebugEnabled()) {

...

}

Another mitigation mechanism is the implementation of dynamic sampling. You can choose to log something only because it meets some criteria:

- The user is part of your debugging segment

- The transaction is marked upstream as traceable

- The transaction is too slow (such as “slow query” for databases)

- …

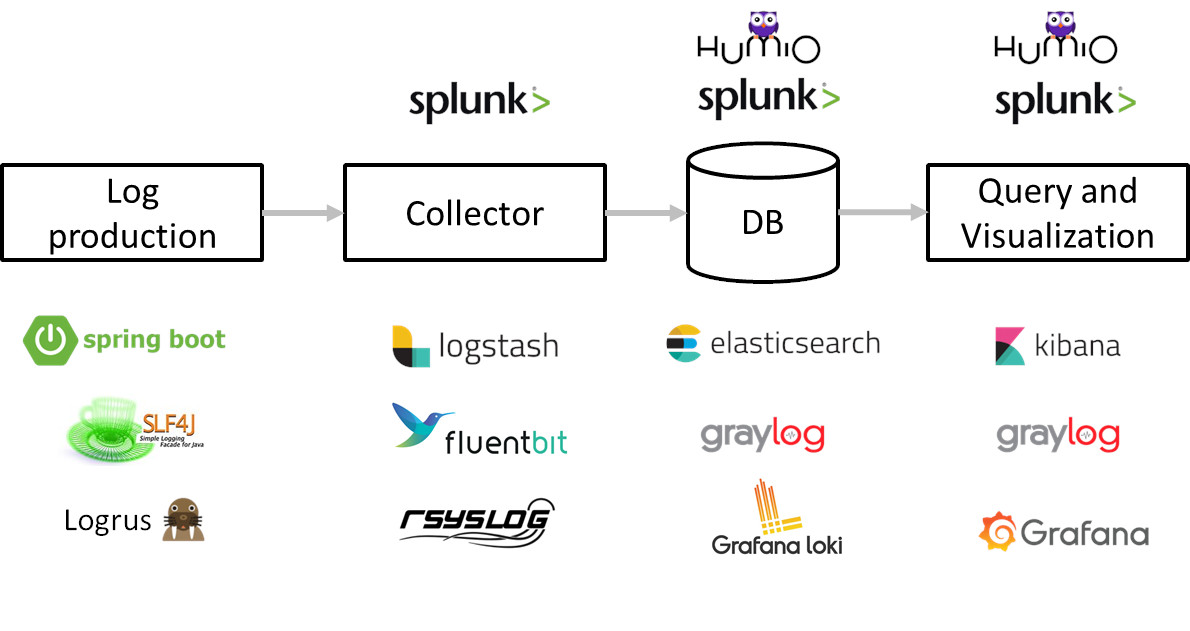

Tooling

All that we have presented previously would be useless without having quality toolbox to handle it all. Tooling is an integral part of your logging management strategy.

We can split the tooling into those categories:

- The Framework used to produce the logs

- The aggregator used to collect, process and transfer logs

- The database used to store, index and provide an advanced query language

- The visualization tool used to produce the dashboard

- The alert system used to trigger alerts based on specific thresholds or conditions

The presentation of each set of tools would deserve a specific article. Implementations are also numerous but some solutions emerge from the batches:

Takeaway

Let’s wrap this paper by a little reminder about logging fundamentals:

Every time you make a logging statement, try to figure out if your logging statements fulfill the following requirements:

- The message level should be appropriate

- The message should be concise and consistant

- The message should be useful and understandable for somebody else

- The context should be preserved

- The message should be easily parsed by an external tool

- The message should not harm the application

- The message should not expose sensitive information

If you do this, your logs will probably become your best ally to run your system. And not a scary elevator door that hides a sea of blood.