Anomaly detection for predictive monitoring

Introduction

Monitoring, the practice of observing systems and determining whether they are healthy, is hard and getting harder. There are several reasons for this. We are managing many more systems (servers, applications and services) and much more data than ever before, and we are monitoring them at a higher resolution. Because it is now not only possible but desirable to monitor practically everything we can, we also collect many more signals from each system than we used to.

Many of us are used to monitoring visually, by watching charts on a screen or on a wall display, or to relying on thresholds. Thresholds are in fact one of the main reasons why monitoring is so hard to do effectively. Put simply, thresholds do not work very well. Setting a threshold on a metric requires a system administrator or DevOps practitioner to decide which value to configure.

The problem is that there is no correct value. A static threshold is just that: static. It does not change over time, and by default it is applied uniformly to all servers or systems. But systems are neither identical nor static. Each system differs from every other, and even an individual system changes, both over the long term and from hour to hour or minute to minute. Threshold-based monitoring is a crude form of anomaly detection that cannot adapt to a system’s unique and changing behavior. It cannot learn what is normal.

Our solution and its benefits

Our anomaly detection solution is based on a dynamic, unsupervised learning approach. It discovers patterns in the data itself by first learning what is normal. Once this model is built, the machine-learning process can spot outliers that fall outside the normal range. The process is adaptive because it relies on a moving window that continuously captures recent aggregated data for each individual system.
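To make the moving-window idea concrete, here is a minimal Python sketch. It is not the toolkit's actual model, which runs in R. It assumes that "normal" means "within `k` standard deviations of the mean of the last `window` points" and flags everything else. The function name and the default values of `window` and `k` are illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev

def rolling_anomalies(values, window=12, k=3.0):
    """Return one flag per point: True if it lies more than k standard
    deviations from the mean of the `window` points that precede it."""
    history = deque(maxlen=window)  # the moving window of recent observations
    flags = []
    for x in values:
        if len(history) < window:
            flags.append(False)  # not enough history yet to define "normal"
        else:
            past = list(history)
            mu, sigma = mean(past), stdev(past)
            flags.append(abs(x - mu) > k * max(sigma, 1e-9))
        history.append(x)  # the window slides forward, so "normal" keeps adapting
    return flags

series = [10, 11, 9] * 4 + [30, 10]
print([i for i, f in enumerate(rolling_anomalies(series)) if f])  # [12]
```

Every new point enters the window, including an anomalous one. As a result, a lasting change in level is eventually absorbed into the baseline, and this is what makes such a detector adaptive rather than static.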

Our anomaly detection solution monitors systems and applications by looking for spikes or drops in metrics that might indicate a problem. By finding unusual metric values, it can surface problems that would otherwise go undetected. Examples include a server that becomes suspiciously busy or idle, or fewer events than expected in a given time interval.

In a world of millions of metrics, it can automatically find changes in an important metric or process, so that humans can investigate and figure out why they happened. It reduces the need to calibrate and recalibrate thresholds across many different machines or services as servers, features and workloads change over time. We do not rely on static thresholds, which raise false alerts at some times of the day or week and miss problems at others.
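The following Python sketch contrasts a single static threshold with a baseline learned separately for each hour of the day. The hourly profile is only an assumed stand-in for our models. It was chosen because it is the simplest way to let "normal" depend on the time of day. The data, the threshold of 100 and the band width `k` are made up for illustration.

```python
from collections import defaultdict
from statistics import mean, stdev

def static_alerts(samples, threshold):
    """One fixed threshold applied to every hour of the day."""
    return [value > threshold for _, value in samples]

def hourly_profile(training):
    """Learn (mean, std) of the metric for each hour of day from (hour, value) pairs."""
    by_hour = defaultdict(list)
    for hour, value in training:
        by_hour[hour].append(value)
    # stdev needs at least two points per hour
    return {h: (mean(v), stdev(v)) for h, v in by_hour.items() if len(v) >= 2}

def profile_alerts(samples, profile, k=3.0):
    """Alert when a value leaves its own hour's band: mean +/- k * std."""
    alerts = []
    for hour, value in samples:
        if hour not in profile:
            alerts.append(False)  # no history for this hour yet
            continue
        mu, sigma = profile[hour]
        alerts.append(abs(value - mu) > k * max(sigma, 1e-9))
    return alerts

training = [(3, 4), (3, 5), (3, 6), (14, 95), (14, 100), (14, 105)]
samples = [(3, 40), (14, 110)]  # night-time spike, busy but normal afternoon
print(static_alerts(samples, threshold=100))              # [False, True]
print(profile_alerts(samples, hourly_profile(training)))  # [True, False]
```

The static threshold misses the night-time spike and raises a false alert in the afternoon. The learned profile does the opposite, which is exactly the failure mode described above.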

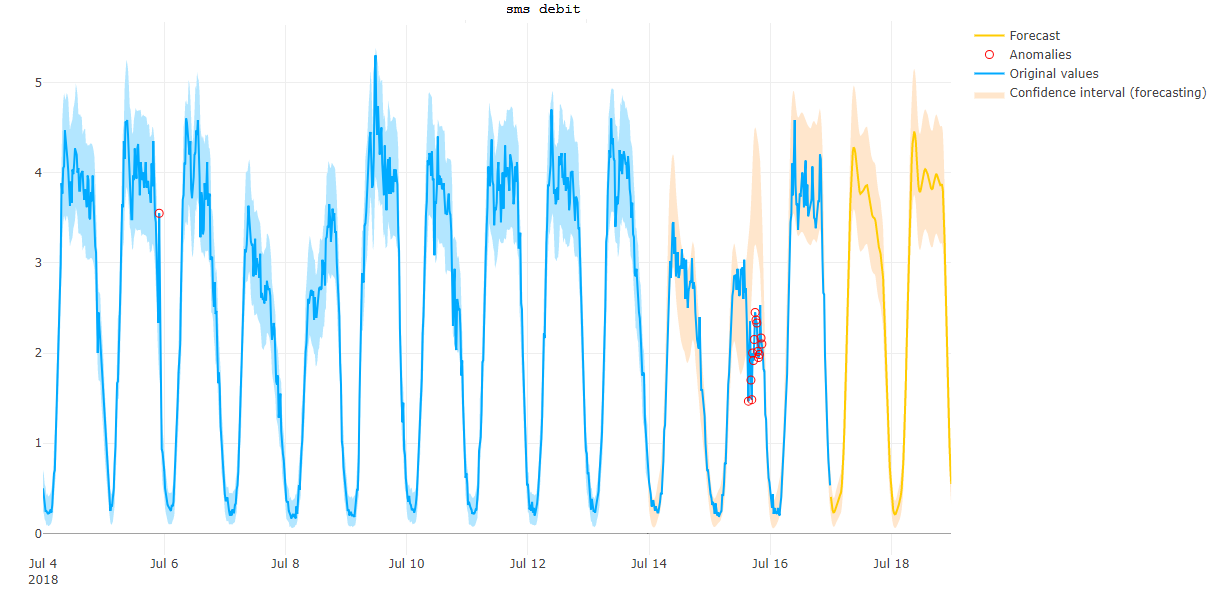

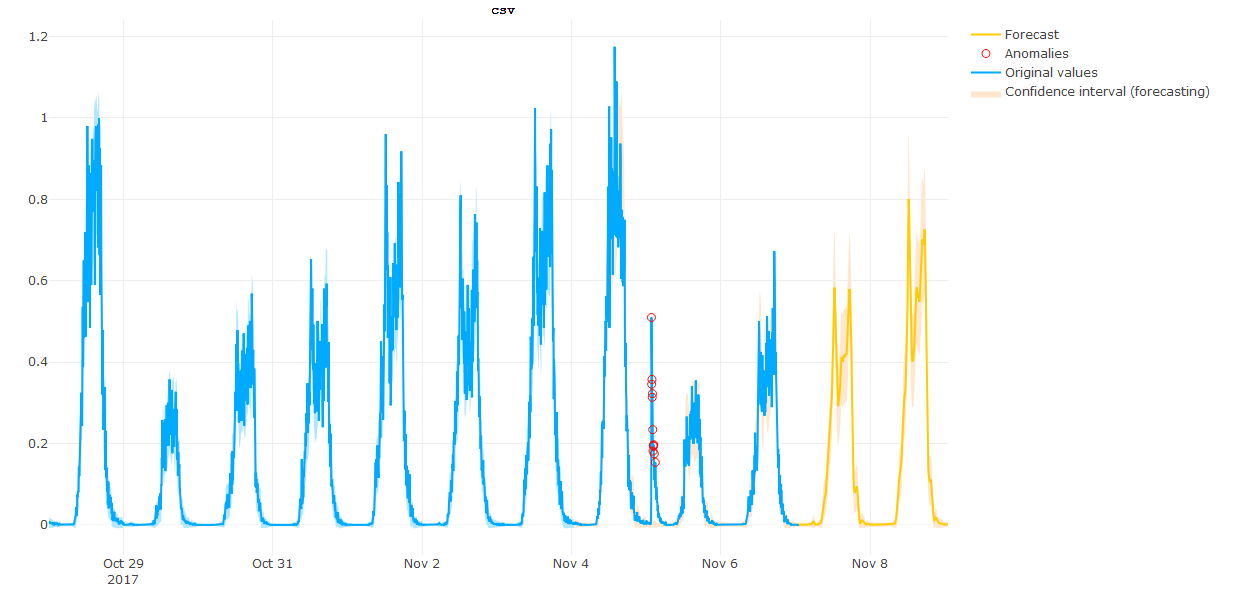

Our anomaly detection solution learns to detect the first weak signals of a disturbance before an issue occurs. It relies on predictions derived from five different models, which express how the system is expected to behave. Unusual behavior is found by comparing the observed behavior with the models’ predictions. As an example, the figure below shows a suspicious drop detected by our solution while monitoring the SMS count in our data center. After investigation, we found that this drop was caused by lower activity during the FIFA World Cup Final.
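The comparison between observed behavior and prediction can be sketched as follows. The article does not describe its five models. The three forecasters below (last value, short moving average, seasonal naive) are therefore hypothetical placeholders, combined by taking their median. The `tolerance` parameter is also an assumption.

```python
from statistics import mean, median

def naive(history, season):
    """Hypothetical model: the next value equals the last observed one."""
    return history[-1]

def moving_average(history, season, n=3):
    """Hypothetical model: the mean of the last n observations."""
    return mean(history[-n:])

def seasonal_naive(history, season):
    """Hypothetical model: the value observed one season earlier."""
    return history[-season]

FORECASTERS = [naive, moving_average, seasonal_naive]

def ensemble_forecast(history, season):
    """Combine the individual predictions; the median resists one bad model."""
    return median(f(history, season) for f in FORECASTERS)

def is_anomalous(history, observed, season, tolerance):
    """Compare the observation with the prediction; return (flag, expected)."""
    expected = ensemble_forecast(history, season)
    return abs(observed - expected) > tolerance, expected

history = [10, 12] * 4
print(is_anomalous(history, 30, season=2, tolerance=3))  # flagged
print(is_anomalous(history, 11, season=2, tolerance=3))  # not flagged
```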

Our anomaly detection solution also produced some very interesting results when monitoring financial transactions. One of the workhorse applications inside equensWorldline is a set of transaction switches that carry financial messages between acquirers and issuers. The network as a whole can exceed 1,000 transactions per second (tps), with individual connections running at over 40 tps. Because it is a critical part of the application landscape, detecting problems early is very important.

When searching for unusually high numbers of rejected transactions, we found a number of anomalies, shown in the next figure. These anomalies occur during the night, when traffic is very low, which makes them very hard to find with thresholds alone. After checking with the internal department that manages the switching applications of equensWorldline, we identified the root cause of the problem.
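A plain threshold on the number of rejections struggles at night for a simple reason. With 10 transactions in an interval, 8 rejections is alarming, yet that count stays far below any limit tuned for daytime traffic. The Python sketch below swaps in a binomial tail test on the rejection count given the traffic volume. It is an illustration, not the toolkit's method. The baseline rejection rate of 2%, the significance level `alpha` and the count limit of 50 are assumed values.

```python
from math import exp, lgamma, log, log1p

def binomial_tail(r, n, p):
    """P(X >= r) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    def log_pmf(i):
        return (lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
                + i * log(p) + (n - i) * log1p(-p))
    return sum(exp(log_pmf(i)) for i in range(r, n + 1))

def count_alert(rejected, limit=50):
    """Static threshold on the raw number of rejections (assumed limit)."""
    return rejected > limit

def rate_alert(rejected, total, baseline_rate=0.02, alpha=1e-4):
    """Flag when this many rejections is very unlikely given the traffic volume."""
    if total == 0:
        return False
    return binomial_tail(rejected, total, baseline_rate) < alpha

# Night: 8 rejections out of 10 transactions. Day: 60 out of 3000 (exactly 2%).
print(count_alert(8), rate_alert(8, 10))        # False True
print(count_alert(60), rate_alert(60, 3000))    # True False
```

Scaling the expectation with traffic catches the night-time burst and ignores the busy but healthy daytime interval. A count threshold does the reverse.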

The anomaly detection toolkit and its democratization

Our main objective is to explore how we can leverage predictive monitoring inside equensWorldline and Worldline, and how we can make data science knowledge accessible to all. This is why we have built a user-friendly web application to assist DevOps practitioners and application managers in their daily work. The web application is aligned with three major goals that we have identified:

- Analyze combined metrics, such as operational and business metrics.

- Encapsulate data science knowledge in accessible, digestible and understandable building blocks.

- Support self-service use that stays close to business and operational expectations.

The architecture

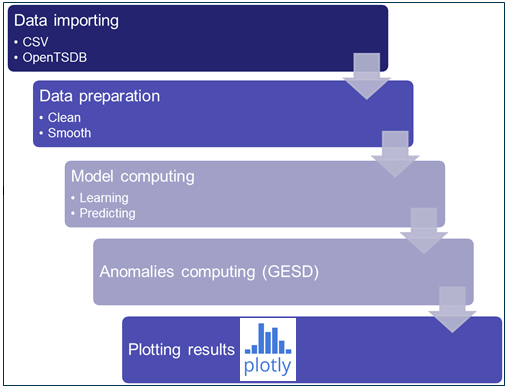

The web application lets the user load one or several metrics and view the results of our analysis as interactive plots. The analysis is split into the following steps:

- The data is imported from CSV files or from an OpenTSDB database into the R environment.

- The data is cleaned (to remove extreme values) and smoothed (to reduce noise) before the modelling step.

- The model learns the normal behaviour and predicts the normal values of the metric.

- The forecast values are compared with the observed values of the metric, and extreme differences are flagged as abnormal.

- The anomalies are plotted as the results of the analysis.
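The five steps can be sketched end to end in Python. The toolkit itself works in R. In this sketch, the CSV column names (`timestamp`, `value`), the median/MAD clipping used for cleaning and the trailing moving average are all assumptions chosen to keep the example short. The moving average serves as both smoother and forecaster. Plotting is replaced by returning the flagged points.

```python
import csv
import io
from statistics import mean, median

def load_csv(text):
    """Step 1: read rows with assumed 'timestamp' and 'value' columns."""
    return [(row["timestamp"], float(row["value"]))
            for row in csv.DictReader(io.StringIO(text))]

def clean(values, k=5.0):
    """Step 2a: clip extreme values to median +/- k * MAD."""
    med = median(values)
    mad = median(abs(v - med) for v in values) or 1e-9
    return [min(max(v, med - k * mad), med + k * mad) for v in values]

def predict(values, n=3):
    """Steps 2b-3: expected value at t = mean of the n previous cleaned points
    (one moving average acting as both smoother and naive forecaster)."""
    return [mean(values[t - n:t]) if t >= n else None for t in range(len(values))]

def detect(text, k=4.0, n=3):
    """Step 4: compare observed and expected values; flag extreme differences."""
    timestamps, observed = zip(*load_csv(text))
    expected = predict(clean(list(observed)), n)
    residuals = [o - e for o, e in zip(observed, expected) if e is not None]
    center = median(residuals)
    scale = 1.4826 * median(abs(r - center) for r in residuals) or 1e-9
    # Step 5 would plot these points; here they are simply returned
    return [(ts, o, e) for ts, o, e in zip(timestamps, observed, expected)
            if e is not None and abs(o - e - center) > k * scale]

sample = "timestamp,value\n" + "\n".join(
    f"07:{5 * i:02d},{v}" for i, v in enumerate([10, 11, 10, 11, 10, 11, 10, 40, 10, 11]))
print(detect(sample))  # only the 07:35 point is flagged
```

The cleaned series feeds the model, but the comparison uses the raw observations. Cleaning therefore keeps a spike from distorting the forecast without hiding the spike itself.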

During the third step, the user can choose a model and set a sensitivity parameter to report more or fewer anomalies. To refine the analysis, the user can select a suitable historical window (a period without breakouts or holidays) to train the models on what they consider to be normal behaviour. Trained models can be saved and reused later to forecast the expected normal behaviour. This step is optional but available to anyone who needs a customized analysis.
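The sketch below illustrates this optional customization step in Python. It fits a model on a user-chosen "normal" window and controls the number of anomalies with a band width `k`, a hypothetical stand-in for the sensitivity parameter. It also serializes the trained model to JSON so that it can be reused. The `TrainedModel` class and the mean/standard-deviation model are illustrative assumptions, not the toolkit's R implementation.

```python
import json
from dataclasses import asdict, dataclass
from statistics import mean, stdev

@dataclass
class TrainedModel:
    """Hypothetical model: mean and standard deviation of the training window."""
    mu: float
    sigma: float

def train(series, start, end):
    """Fit on series[start:end], the period the user considers normal."""
    window = series[start:end]
    return TrainedModel(mu=mean(window), sigma=stdev(window))

def anomalies(series, model, k=3.0):
    """Indices outside mu +/- k * sigma; a smaller k (higher sensitivity) flags more points."""
    return [i for i, x in enumerate(series) if abs(x - model.mu) > k * model.sigma]

def save_model(model):
    """Serialize the trained model so it can be stored and reused later."""
    return json.dumps(asdict(model))

def load_model(text):
    return TrainedModel(**json.loads(text))

series = [100, 102, 98, 101, 99, 300, 100, 50, 101]
model = train(series, 0, 5)       # train only on the quiet first five points
print(anomalies(series, model, k=3))  # [5, 7]
print(anomalies(series, model, k=1))  # [1, 2, 5, 7]
reused = load_model(save_model(model))
```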

Easy to install

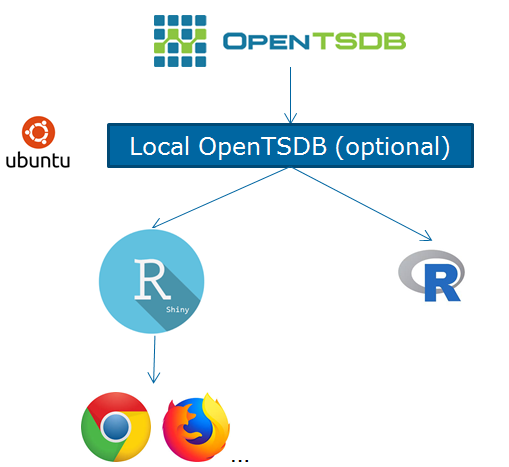

The framework of our toolkit is composed of:

- A time series database to store the metrics (OpenTSDB in our case). Although CSV files are also supported, OpenTSDB is much more convenient for our work.

- A Linux virtual machine to host a local OpenTSDB instance and/or to run our application.

- The R statistical software to run our algorithms, together with Shiny, the R package behind the web application.

- A web browser to use the web application.
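Reading metrics from OpenTSDB goes through its HTTP API. The Python sketch below builds a `/api/query` request, made of a `start` time plus an `m=aggregator:metric{tag=value}` sub-query. It then turns the `dps` map of the JSON response into sorted `(timestamp, value)` pairs. The host, port, metric name and tags are hypothetical, and the toolkit's own R connector may work differently.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

def build_query_url(base, metric, start, aggregator="sum", tags=None):
    """Build an OpenTSDB /api/query URL such as m=sum:sys.cpu.user{host=web01}."""
    tag_part = ""
    if tags:
        tag_part = "{" + ",".join(f"{k}={v}" for k, v in sorted(tags.items())) + "}"
    params = urlencode({"start": start, "m": f"{aggregator}:{metric}{tag_part}"})
    return f"{base}/api/query?{params}"

def parse_dps(payload):
    """Convert the JSON response into one sorted list of (epoch, value) per series."""
    return [sorted((int(ts), float(v)) for ts, v in s["dps"].items())
            for s in json.loads(payload)]

def fetch(base, metric, start, **kwargs):
    """Query a running OpenTSDB instance (requires network access)."""
    with urlopen(build_query_url(base, metric, start, **kwargs)) as resp:
        return parse_dps(resp.read().decode("utf-8"))

# Example against a hypothetical local instance on the default port 4242:
# fetch("http://localhost:4242", "sys.cpu.user", "1h-ago", tags={"host": "web01"})
```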

The installation and build of the application are automated, and most of the steps needed to deploy it on a virtual machine are simple enough for beginners.

Results

Focus on a real issue

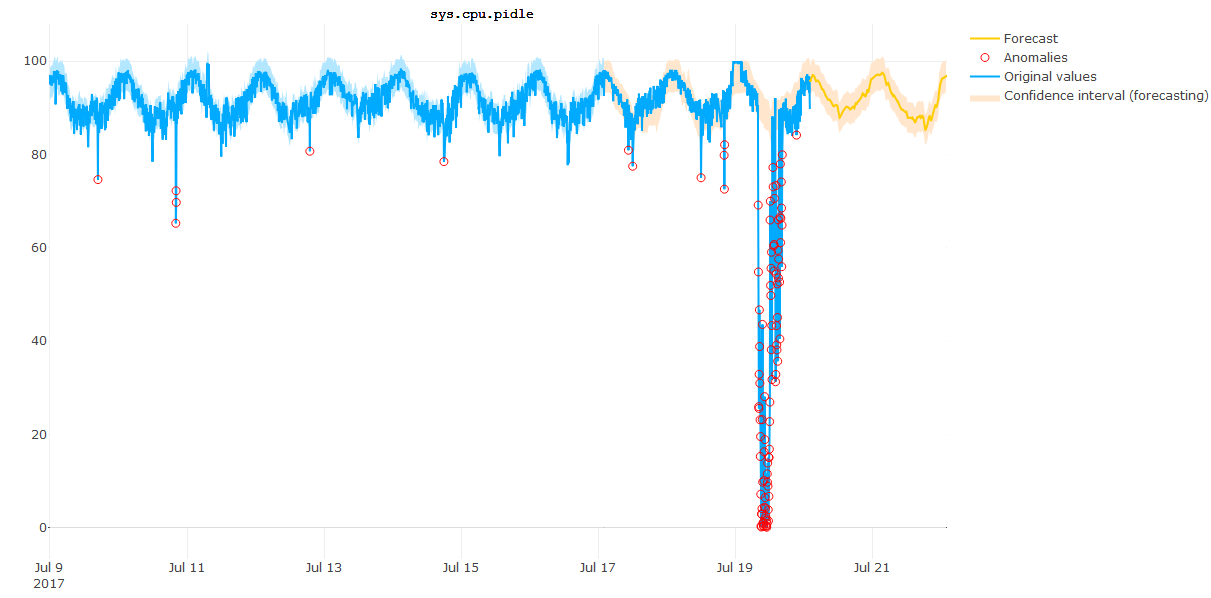

Here is one metric we tested with the toolkit: a CPU metric from last year that includes a major breakout. As you can see in the next picture, we detect some potential weak signals before the major failure that caused the issue. As for the failure itself, what is interesting is that we detect it at an early stage: the last “normal” signal arrives at 7:50, and the start of the incident is detected at 7:55.

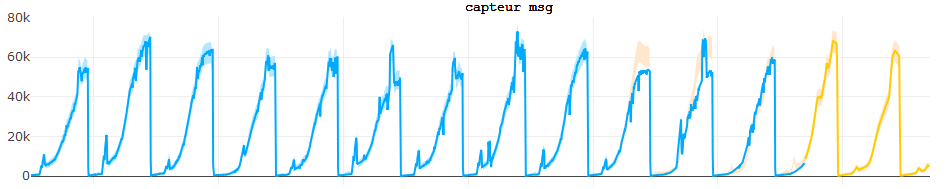

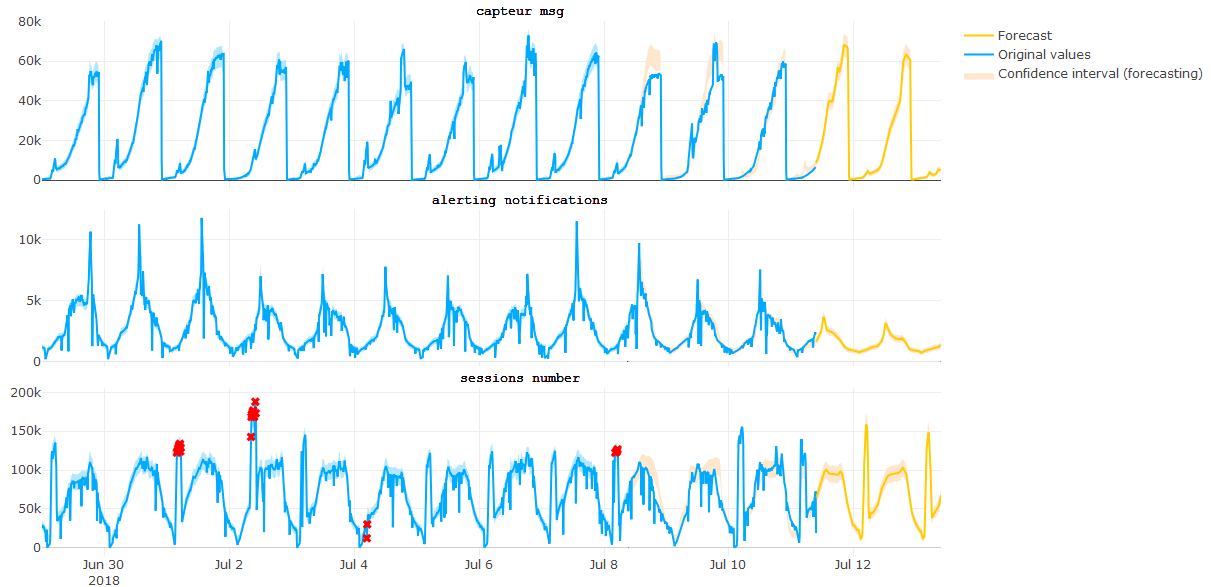

Multi-metric anomaly detection

When monitoring an application, we usually track several metrics. Our toolkit lets you visualize anomalies found on multiple metrics at the same time. As illustrated in the next figure, it can keep an eye on different KPIs that measure several flows of an application, such as the number of sessions, notifications and messages, and warn against unusual peaks or drops.
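Showing anomalies from several KPIs in one view amounts to running a detector on each metric and aligning the results on a shared time axis. The Python sketch below does this with an assumed median/MAD detector, although any per-metric detector could be passed in instead. The KPI names mirror the examples above, and the values are invented.

```python
from statistics import median

def mad_flags(values, window=6, k=5.0):
    """Indices whose value is more than k MADs away from the median of the
    previous `window` points (an assumed, robust per-metric detector)."""
    flagged = set()
    for t in range(window, len(values)):
        past = values[t - window:t]
        med = median(past)
        mad = median(abs(v - med) for v in past) or 1e-9
        if abs(values[t] - med) > k * mad:
            flagged.add(t)
    return flagged

def anomaly_timeline(metrics, detector=mad_flags):
    """Map each time index to the sorted names of the KPIs flagged at that index."""
    timeline = {}
    for name, values in metrics.items():
        for t in detector(values):
            timeline.setdefault(t, []).append(name)
    return {t: sorted(names) for t, names in sorted(timeline.items())}

metrics = {
    "sessions": [100, 101, 99, 100, 101, 99, 100, 180],
    "notifications": [50, 51, 49, 50, 51, 49, 50, 50],
    "messages": [20, 21, 19, 20, 21, 19, 20, 2],
}
print(anomaly_timeline(metrics))  # {7: ['messages', 'sessions']}
```

A peak in sessions that coincides with a drop in messages is far more telling than either anomaly on its own, which is why a shared timeline is useful.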

Conclusion

In conclusion, our application lets you skip defining rules, specifying thresholds or manually building statistical models, and makes it easy to start identifying anomalies. Just provide the data you are interested in analyzing, either as a CSV file or in an OpenTSDB database. Specify the metric (e.g. requests per second) and any other properties that might be important (server, IP, username), and that is it.

Acknowledgement

Many thanks to the Worldliners who have followed our work, installed the toolkit and given us their feedback.