JAX DevOps London: quick review of this event

JAX DevOps 2018 ended in mid-April. It took place in London, and it was the first time I attended this conference. Here are the things I noticed during this large-scale event.

First of all, I was very glad to be at this convention. It was really worth it! Compared to other conventions I have attended, this one was smaller and more user-friendly, which made it easier to talk with the speakers and the other attendees.

Brief description:

- JAX was first launched in Germany in 2001 and has since had successful runs in Europe, North America, India, Singapore and Indonesia.

- Two days of conferences for software experts, combined with JAX Finance, an opportunity to talk about non-technical subjects (blockchain and its future usage, leadership and environment, etc.)

- Two days of workshops to implement or test a solution, led by experts in the domain

- Featured in-depth knowledge about the latest technologies and methodologies for lean businesses.

- We talked about DevOps, of course, but also about Agile, delivery cycles and methodologies.

- 60+ Workshops, sessions, and keynotes

- 40+ International speakers and industry experts

- Up to three sessions at a time, few enough that you do not regret missing one of them

- And of course everything needed to start the day well!

- Some sponsors, like JetBrains, Axway, PagerDuty and Datadog. The last one presented its real-time performance monitoring offer: a centralized platform fed by Go agents that provides real-time visibility and application performance monitoring, and centralizes logs. Very interesting for those who want a ready-to-use solution! https://www.datadoghq.com

First keynote: structure of DevOps revolution

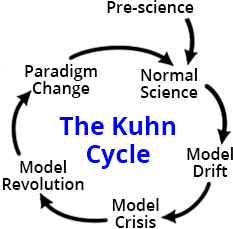

Mike Long drew a parallel between the DevOps initiative and the scientific method. In his view, DevOps will be the disruptive paradigm shift.

The success of this era depends on new tools and practices: automated tests, continuous integration, automated deployment, cloud provisioning, rollbacks, and the breaking down of silos. The underlying model is in line with the Agile Manifesto, whose first principle states that the “highest priority is to satisfy the customer through early and continuous delivery of valuable software”.

But tools are not enough; data is also necessary. I liked his comparison with what the progress of medicine has taught us about data: for decades, the only way to get information about your disease was to wait for your death and then have your body analyzed. Later, it became possible to collect data, and analyzing it saved other people's lives. Thankfully, data is now easier to capture and just needs to be analyzed in time to be useful.

Going forward, two important pieces of data to collect will be the number of deployments and the time spent deploying.
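
As a rough illustration of these two measures, here is a minimal Python sketch that counts deployments per day and averages their duration. The record format and the sample values are invented for the example; they are not data from the talk.

```python
from datetime import datetime
from statistics import mean

# Hypothetical deployment log: (start, end) timestamps of each deployment.
DEPLOYMENTS = [
    (datetime(2018, 4, 9, 10, 0), datetime(2018, 4, 9, 10, 12)),
    (datetime(2018, 4, 9, 15, 30), datetime(2018, 4, 9, 15, 38)),
    (datetime(2018, 4, 10, 11, 0), datetime(2018, 4, 10, 11, 10)),
]


def deployments_per_day(deployments):
    """Count deployments per calendar day, keyed by the start date."""
    counts = {}
    for start, _end in deployments:
        counts[start.date()] = counts.get(start.date(), 0) + 1
    return counts


def average_duration_minutes(deployments):
    """Average time spent deploying, in minutes."""
    return mean((end - start).total_seconds() / 60 for start, end in deployments)
```

Tracking both numbers over time shows whether a team is deploying more often and whether each deployment is getting cheaper.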

See: https://jaxenter.com/jax-devops-interview-mike-long-143187.html

Ref: http://www.thwink.org/sustain/glossary/KuhnCycle.htm

Second keynote: Beyond ICO, create value with blockchain

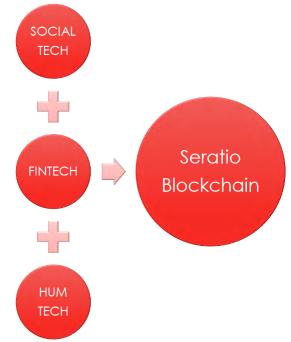

Barbara Mellish introduced the Social Earnings Ratio (S/E Ratio), which aims to capture and articulate non-financial, intangible value.

This was a view of what the future may hold for blockchain technology and cryptocurrencies. They are not yet enough to counterbalance the £995 billion of bank transactions a day, but they may be the next cross-cutting economy to pay attention to.

After the Internet of Things, blockchain will probably spread the Internet of Value widely. All this value can be transacted by means of the Seratio Token and Microshares.

See: https://www.seratio.com/home

https://www.seratio-coins.world/index-fr.html

CI/CD for humans

Jackie Balzer presented Moonbeam, their internal solution for building a build queue system and making updates safely.

She explained, step by step, her process for developing and deploying apps:

- Selection of the service to deploy

- Queuing of deployment requests

- Pull request selection (to pick only the requests that are open and ready for production, with dependency information)

- Deployment step by step and environment by environment (Build/Dev/Stage/Prod), with a checklist at each phase…

- …until the push on master and, therefore, the delivery to production

A great example of what a fully automated delivery to production can look like!
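
The steps above can be sketched in a few lines of Python. This is not Moonbeam's implementation, which was not shown in detail; the class names, the fields and the checklist callback are all hypothetical and only mirror the process described in the list.

```python
from collections import deque
from dataclasses import dataclass, field

# Environments in the order given in the list above.
ENVIRONMENTS = ["Build", "Dev", "Stage", "Prod"]


@dataclass
class PullRequest:
    number: int
    is_open: bool
    ready_for_production: bool


@dataclass
class DeployRequest:
    service: str
    pull_requests: list
    log: list = field(default_factory=list)


def select_pull_requests(pull_requests):
    """Keep only pull requests that are open and ready for production."""
    return [pr for pr in pull_requests if pr.is_open and pr.ready_for_production]


def run_queue(queue, checklist_passes):
    """Process deploy requests one at a time, in arrival order.

    checklist_passes(request, environment) is a hypothetical callback that
    stands in for the per-phase checklist; a failure stops that deployment.
    """
    finished = []
    while queue:
        request = queue.popleft()
        for environment in ENVIRONMENTS:
            if not checklist_passes(request, environment):
                request.log.append(f"stopped at {environment}")
                break
            request.log.append(f"deployed to {environment}")
        finished.append(request)
    return finished


# Usage: one queued request whose checklist fails at the Stage phase.
prs = [PullRequest(1, True, True), PullRequest(2, True, False), PullRequest(3, False, True)]
queue = deque([DeployRequest("checkout", select_pull_requests(prs))])
done = run_queue(queue, lambda request, environment: environment != "Stage")
```

Processing one request at a time is what makes the queue safe: a deployment either passes every checklist up to Prod or stops before reaching it.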

See: http://jeanniehuangdesigns.com/adobe-build-queue-system/

Effective leadership in an agile DevOps environment

Michiel Rook gave an interesting talk about the place of the leader in Agile/DevOps environments.

DevOps encourages self-organization in teams, and the question we tried to answer during that session was: is a leader always necessary for a team to work in DevOps mode?

We looked at each other's psychology and at how each of us reacts to the different activities in a project.

The general answer was that we need a way for everyone to move in the same direction. Project managers must find their place by learning how to adjust their positioning to the different profiles they work with.

“Leadership is action, not position.” - Donald H. McGannon

See: Effective_leadership_in_Agile_DevOps_environments_Michiel_Rook.pdf

Infrastructure as Code: Build Pipelines with Docker and Terraform

Kai Tödter, a Siemens key expert, showed how to automate the creation of a build pipeline with Docker-based infrastructure, Jenkins, SonarQube and Artifactory. Starting from a key DevOps principle, everything as code, Kai introduced infrastructure as code and gave a live demo of a complete deployment on AWS using only configuration files.

Main advantages of this kind of solution:

- Everything is maintained as code and centralized

- Everything is planned and can be previewed before applying

- Everything is replayable, regardless of how many servers are in place

The demo is accessible at https://github.com/toedter/cd-pipeline
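
The "preview before applying" idea can be illustrated without any particular tool. The following Python sketch is not Terraform and does not use its API; it only mimics the plan/apply principle with plain dictionaries, and every name and value in it is hypothetical.

```python
def plan(current, desired):
    """Compute the changes needed to move from the current to the desired state."""
    changes = []
    for name, spec in desired.items():
        if name not in current:
            changes.append(("create", name, spec))
        elif current[name] != spec:
            changes.append(("update", name, spec))
    for name in current:
        if name not in desired:
            changes.append(("delete", name, None))
    return changes


def apply_changes(current, changes):
    """Return a new state with the planned changes applied."""
    state = dict(current)
    for action, name, spec in changes:
        if action == "delete":
            state.pop(name)
        else:
            state[name] = spec
    return state


# Desired infrastructure described as data, as it would be in versioned files.
desired = {
    "jenkins": {"image": "jenkins", "port": 8080},
    "sonarqube": {"image": "sonarqube", "port": 9000},
}
current = {"jenkins": {"image": "jenkins", "port": 8081}}

changes = plan(current, desired)             # preview: nothing is modified yet
new_state = apply_changes(current, changes)  # apply the previewed changes
```

Running the plan again on the new state yields no changes, which is the property that makes such a description replayable.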

Lessons Learned from Providing Official Docker Images

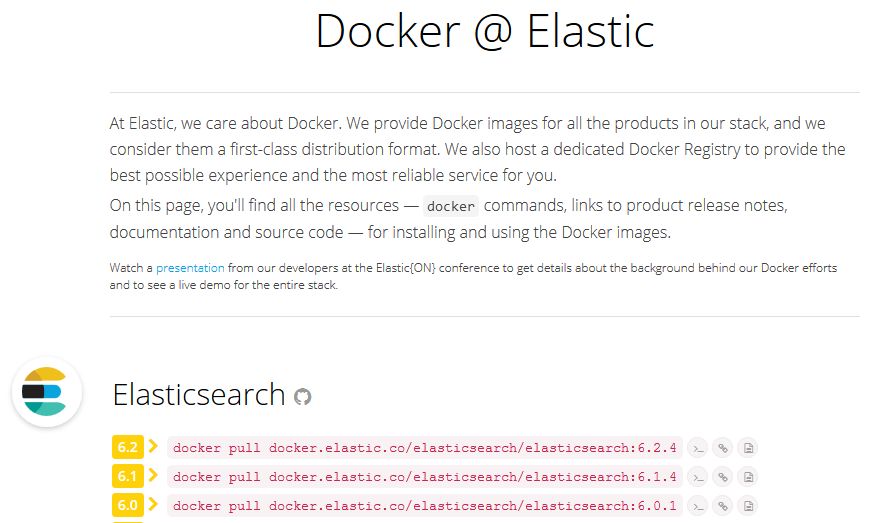

Philipp Krenn gave an overview of how the Elastic Stack works with Docker.

The aim was not to present the new features of Elasticsearch 6.0 (if you are interested, there is a write-up, in French, at https://blog.worldline.tech/2018/04/11/elastic-on-tour-paris-2018.html), but to outline the future of Elastic with Docker images.

First of all, pay attention to which Docker registry you pull from. Do not run “docker pull elasticsearch”, as it points to a deprecated, non-official image! Instead, use https://www.docker.elastic.co/ (or https://hub.docker.com/r/elastic/elasticsearch/) to get the correct Elastic images. Elastic is interested in collecting statistics about which image versions are used, so do not hesitate to use that registry!

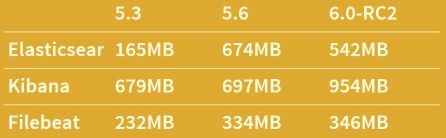

After that, the basic idea was to discuss the future and what can be done with images:

- Smaller images? Size is not the only thing that matters!

- Multiple JDK versions?

- Multiple base images?

- Windows compatibility?

- Add an image version to get a release policy?

- …

Logging and Tracing for your Microservices – Log4j, Zipkin and Spring Sleuth

Alexander Schwartz presented a typical use case of log management in microservices, with the usual problem of following errors and locating the points of failure. SLF4J and Log4j are a good way to get logs out of a single service, but they are not enough when multiple services interact with each other. Adding context to the logs (with MDC, the Mapped Diagnostic Context) and being able to identify the path of a call is ideal for debugging microservices.
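
MDC is an SLF4J/Log4j feature. The sketch below is only a Python analogue of the idea, built on the standard library: a per-call context value is attached to every log line. The logger name and the request ID are hypothetical.

```python
import contextvars
import io
import logging

# Per-call context, playing the role MDC plays in SLF4J/Log4j.
request_id = contextvars.ContextVar("request_id", default="-")


class ContextFilter(logging.Filter):
    """Copy the current request ID onto every log record."""

    def filter(self, record):
        record.request_id = request_id.get()
        return True


def build_logger(stream):
    """Create a logger whose lines are prefixed with the request ID."""
    logger = logging.getLogger("orders")  # hypothetical service name
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("[%(request_id)s] %(message)s"))
    handler.addFilter(ContextFilter())
    logger.addHandler(handler)
    return logger


stream = io.StringIO()
log = build_logger(stream)

request_id.set("req-42")  # set once at the start of the call
log.info("order received")
log.info("payment requested")
```

The calling code never passes the ID to the logger explicitly; it is set once per call and picked up by every log statement.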

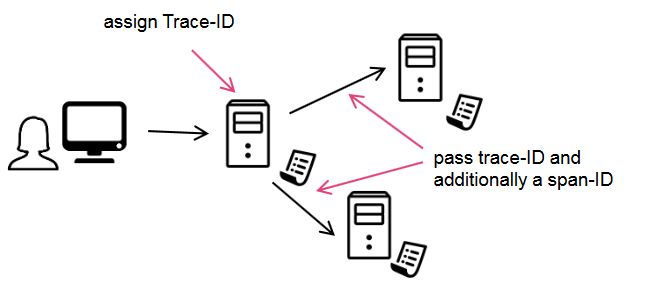

And that is the idea behind Spring Cloud Sleuth! With a trace ID passed along through all the different calls, debugging becomes a piece of cake. Just add the Spring Cloud Sleuth dependency to your Maven pom file.
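
In a Spring application the library takes care of this for you. Purely to illustrate the principle, the Python sketch below passes a trace ID from one simulated service to the next through a header. The X-B3-TraceId name follows the B3 header convention used by Zipkin; the two services and the in-memory trace log are hypothetical.

```python
import secrets

TRACE_HEADER = "X-B3-TraceId"


def ensure_trace_id(headers):
    """Reuse the incoming trace ID, or start a new trace if there is none."""
    return headers.get(TRACE_HEADER) or secrets.token_hex(8)  # 16 hex characters


def inventory_service(headers, trace_log):
    """Downstream service: records its work under the received trace ID."""
    trace_id = ensure_trace_id(headers)
    trace_log.append((trace_id, "inventory"))


def order_service(headers, trace_log):
    """Entry point: starts the trace and forwards its ID on the outgoing call."""
    trace_id = ensure_trace_id(headers)
    trace_log.append((trace_id, "order"))
    inventory_service({TRACE_HEADER: trace_id}, trace_log)


trace_log = []
order_service({}, trace_log)  # no incoming header: a new trace is started
```

Because both entries carry the same ID, the log lines of the two services can be joined to reconstruct the path of the call.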

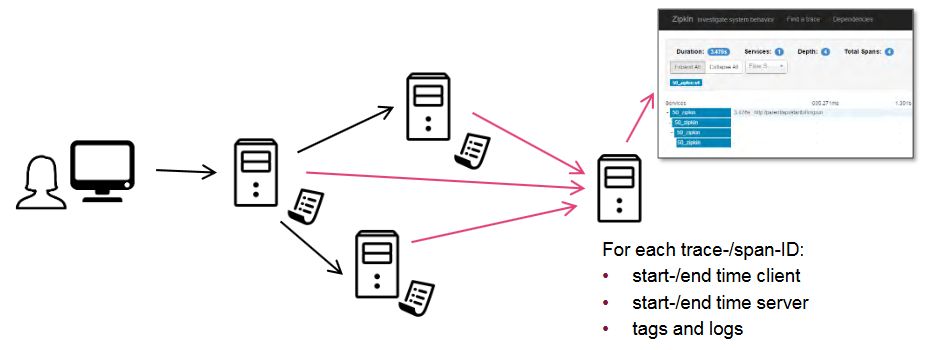

Zipkin can then be used as a central store to analyze errors, timing issues and dependencies, even in production (you can adjust the percentage of requests to trace)!
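
Tracing only a percentage of requests can be sketched as a simple probabilistic decision. This is an illustration of the principle in Python, not the sampler that Sleuth or Zipkin actually ships; the function name and the 10% rate are assumptions made for the example.

```python
import random


def make_sampler(rate, rng=None):
    """Return a function that decides whether a request should be traced.

    rate is the fraction of requests to trace, between 0.0 and 1.0.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    rng = rng or random.Random()

    def should_trace():
        return rng.random() < rate

    return should_trace


# Trace roughly 10% of requests; the seed only makes the run repeatable.
sampler = make_sampler(0.1, random.Random(2018))
traced = sum(sampler() for _ in range(10_000))
```

A low rate keeps the overhead small in production while still collecting enough traces to spot timing issues.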

As a conclusion

JAX DevOps (& Finance) brought a lot of interesting ideas, methods and tools to use! I will not compare it to other conferences on similar topics. I will just say that JAX DevOps is a good place to learn:

- Combining two days of workshops with two days of conferences let me pick the days I was interested in and learn actively, not only passively

- Having a limited number of sessions at the same time meant I had no regrets about missing one session for another. It also limits the number of attendees, which avoids crowding at the sessions. At last, a conference where I could breathe and take the time to talk with speakers and other attendees!