JavaOne 2017 - CQRS, Event sourcing and Sagas

More feedback from JavaOne 2017. This article follows these already published articles:

- JavaOne 2017 - Java 9, Jigsaw, Java EE 8 and EE4J

- JavaOne 2017 - Java 8, Three Years Ago. Functional & Reactive Programming

JavaOne is about far more than core Java and Java EE: it embraces the whole Java ecosystem, and many talks go even broader, covering architectural and organizational topics for example. This article is about an interesting set of talks on data management and microservices.

Microservices are now more than a trend: they are becoming a mainstream way to develop large applications. Just searching for ‘microservice’ in the JavaOne 2017 session catalog leads you to dozens of sessions. And indeed they bring many proven benefits such as flexibility and scalability.

But the real challenge with microservices is to handle data properly in the case of complex transactional processing. Scaling large data processing in a transactional environment is still hard, and microservices are no silver bullet.

Anyway, across the various JavaOne 2017 talks on this topic, a broad consensus seems to have been reached on the architecture patterns that address this issue. I said ‘architecture patterns’ and not ‘frameworks’ or ‘solutions’ on purpose: detailed implementations still differ from one case to another, and many speakers admit they had to build their own framework for their situation. So SQL, NoSQL, messaging solutions, etc. are more a question of taste and of constraints specific to the project. The good news is that architecture still matters!

Best practices to tear down your data monolith

Forget about 2PC (two-phase commit)

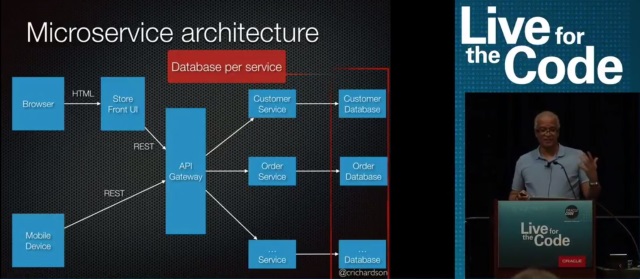

In the microservice world, one service = one database. That does not have to mean a complete database instance; a dedicated schema is often sufficient. But in all cases it implies that you cannot rely on the traditional transactions offered by a database when an operation involves more than one microservice.

Image source: talk 1

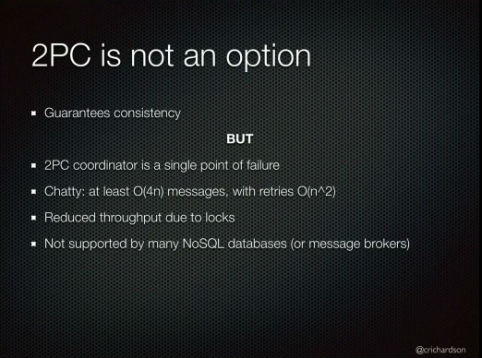

Of course, distributed transactions have been around for a while. But apart from the complexity of making them reliable, their main drawback is that they do not scale. This is primarily because 2PC is a blocking protocol (applications have to wait for its completion) and because it requires many messages to complete a transaction. 2PC may be acceptable at small scale but is clearly prohibitive for highly scalable applications.

Image source: talk 1
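To make the message cost concrete, here is a minimal sketch that counts the messages exchanged for one transaction. It assumes the textbook protocol (prepare, vote, decision, acknowledgement) with a single coordinator and no failures or retries; `TwoPhaseCommitCost` and `messagesPerTransaction` are hypothetical names, not taken from any talk.

```java
// Hypothetical illustration: message count for one textbook 2PC transaction,
// assuming a single coordinator, no failures and no retries.
final class TwoPhaseCommitCost {

    // Phase 1: the coordinator sends PREPARE to each participant, each replies with a vote.
    // Phase 2: the coordinator sends COMMIT or ABORT to each participant, each acknowledges.
    static int messagesPerTransaction(int participants) {
        if (participants < 1) {
            throw new IllegalArgumentException("need at least one participant");
        }
        int prepareAndVotes = 2 * participants;
        int decisionAndAcks = 2 * participants;
        return prepareAndVotes + decisionAndAcks;
    }

    public static void main(String[] args) {
        for (int n : new int[] {2, 5, 10}) {
            System.out.println(n + " participants -> " + messagesPerTransaction(n) + " messages");
        }
    }
}
```

On top of the message count, every participant has to hold its locks until the decision arrives, so the slowest participant sets the pace for all the others.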

Accept eventual consistency

After decades of the strong consistency paradigm, this one is still hard to swallow. Even if it is simply a consequence of the CAP (Consistency, Availability, Partition tolerance) theorem for distributed systems (and remember you already decided to drop two-phase commit for that reason), we are still living in the “illusion of absolute now”.

A common assumption is: “if I modify a business object somewhere, every microservice should instantly have access to that modification…” OK, it does not really work like this. There is always a delay between the moment you do something and the moment anyone else sees what you have done. It can be days, seconds or milliseconds; that does not change the fact that during this delay something might go wrong, so consistency is not guaranteed instantly but only eventually (that is, once everything that was done has been reconciled).

Image source: talk 5

This sounds like a big problem, but in real life the vast majority of what we do is doomed to eventual consistency… and guess what? In many businesses we do not really care! What we expect is that the process is reliable (nothing gets lost), that we get feedback within a reasonable delay, and finally that failures are compensated in the right way.

We have all already experienced eventual consistency. For instance, if you place an order on your favorite Black Friday offer but it cannot be delivered for some reason, you get a refund instead. So, even if your transaction was already accepted, a reconciliation process rolls back what was done by means of a compensating event.

It works in many business domains (but maybe do not do that if your job is to launch a rocket, strong consistency is still important sometimes).

Build Sagas

This is where Sagas come into play. You accepted eventual consistency and the lack of global transactions; that does not mean you will accept unpredictable consistency.

The idea is simple: you chain a list of calls to microservices and you expect eventual consistency (in the end the request is either completed or compensated). To reduce the risk of failure, you set up something like event-based communication between microservices. Each microservice has to manage its incoming messages, its own database updates and its outgoing messages. Queuing events then makes it easy to stop and start microservices without harming ongoing processes.
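As a rough illustration of that last point, here is a minimal in-memory sketch of one service that consumes an incoming event, updates its own store and publishes an outgoing event. Everything in it is an assumption made for the example: the `PaymentService` name, the event strings, and the use of `ArrayDeque` in place of a real, durable message broker.

```java
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.Map;
import java.util.Queue;

// Hypothetical in-memory sketch: a real system would use a durable message broker.
final class QueuedServiceSketch {

    // Stands in for one microservice with its own private data store.
    static final class PaymentService {
        final Map<String, String> ownDatabase = new HashMap<>();

        // Consume one incoming event, update local state, publish the outcome.
        void handleNext(Queue<String> inbox, Queue<String> outbox) {
            String orderId = inbox.poll();
            if (orderId == null) {
                return; // nothing is waiting
            }
            ownDatabase.put(orderId, "PAID");
            outbox.add("PaymentAccepted:" + orderId);
        }
    }

    public static void main(String[] args) {
        Queue<String> orderPlaced = new ArrayDeque<>();
        Queue<String> paymentEvents = new ArrayDeque<>();

        // Events pile up in the queue while the payment service is stopped.
        orderPlaced.add("order-1");
        orderPlaced.add("order-2");

        // Once the service is started again, it simply drains the backlog.
        PaymentService payment = new PaymentService();
        while (!orderPlaced.isEmpty()) {
            payment.handleNext(orderPlaced, paymentEvents);
        }
        System.out.println(paymentEvents);
    }
}
```

The producer never talks to the payment service directly, which is why stopping the service only delays the processing instead of breaking it.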

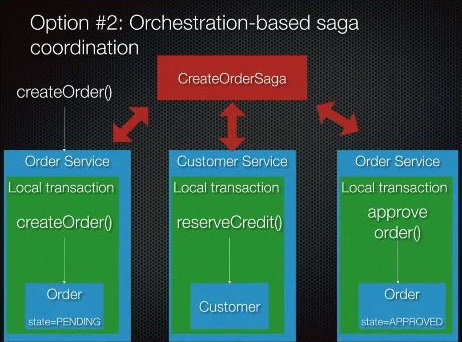

There is more than one way to implement Sagas; a preferred one is to put a lightweight message routing service in charge of orchestrating the global process you want to achieve.

Image source: talk 1

But remember that you do not get automatic rollback with Sagas: if something goes wrong, you need a compensation process. So you have to define all the compensation processes needed in your system. By the way, compensation processes are sometimes fairly simple, like doing nothing or simply cancelling the previous order.
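The orchestration and compensation logic can be sketched in a few lines. This is only an in-process approximation under stated assumptions: the `Step` type, the `run` method and the step names are hypothetical, and real steps would be asynchronous messages to other microservices rather than local lambdas.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Deque;
import java.util.List;
import java.util.function.BooleanSupplier;

// Hypothetical in-process sketch of an orchestrated saga.
final class SagaSketch {

    static final class Step {
        final String name;
        final BooleanSupplier action;  // returns false when the step fails
        final Runnable compensation;   // undoes the step once it has completed

        Step(String name, BooleanSupplier action, Runnable compensation) {
            this.name = name;
            this.action = action;
            this.compensation = compensation;
        }
    }

    // Runs the steps in order; on failure, compensates completed steps in reverse order.
    static boolean run(List<Step> steps) {
        Deque<Step> completed = new ArrayDeque<>();
        for (Step step : steps) {
            if (step.action.getAsBoolean()) {
                completed.push(step);
            } else {
                while (!completed.isEmpty()) {
                    completed.pop().compensation.run();
                }
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        List<Step> steps = Arrays.asList(
            new Step("createOrder", () -> log.add("order created"), () -> log.add("order cancelled")),
            new Step("reserveStock", () -> log.add("stock reserved"), () -> log.add("stock released")),
            // Simulated failure: nothing was done, so there is nothing to undo.
            new Step("chargeCard", () -> false, () -> { }));

        boolean succeeded = run(steps);
        System.out.println("succeeded=" + succeeded + " log=" + log);
    }
}
```

Note that the failed step itself is not compensated, only the steps that completed before it, and that the "do nothing" compensation mentioned above is just an empty `Runnable`.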

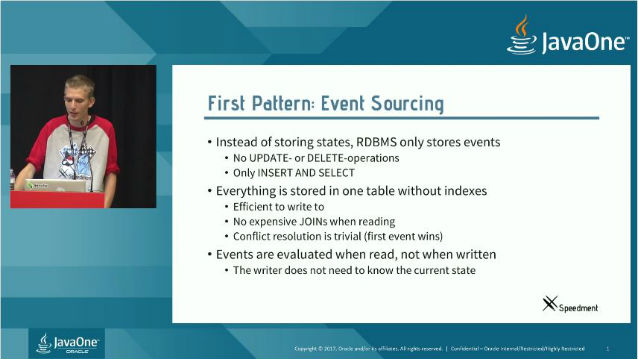

Try event sourcing

The event sourcing principle is quite simple: it is the way your bank account is managed.

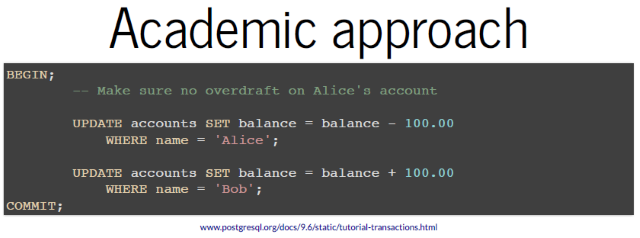

People sometimes use banking examples like this one to justify the need for transactions to keep accounts consistent:

Image source: talk 6

But banking is far more complex, and bank accounts are not all located inside a single big database of a global bank. What is really stored for a transaction is more like an event such as this:

Image source: talk 6

Therefore your account state is the result of a computation over a collection of events. Once an event has been added to your account, it can be neither modified nor deleted. If you want to correct something, the only possibility is to write a new event that compensates the original one. And an important point is that there is always a delay while events propagate.

Event sourcing can be seen as a replacement for traditional CRUD. That is not really the case: it is more about how you store the data resulting from your CRUD operations. Instead of updating the ‘customer1’ object with ‘address1’, you store an event for ‘customer1’ of type ‘address changed’ with data ‘address1’. When you want to read the address of ‘customer1’, you have to look up the latest ‘address changed’ event related to ‘customer1’.
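Here is a minimal sketch of that idea: an account whose balance is never stored, only computed from an append-only list of events. The `AccountSketch` class, the event descriptions and the choice of cents as the unit are assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical sketch of an event-sourced account; amounts are in cents.
final class AccountSketch {

    static final class Event {
        final String description;
        final long amountInCents; // positive = credit, negative = debit

        Event(String description, long amountInCents) {
            this.description = description;
            this.amountInCents = amountInCents;
        }
    }

    private final List<Event> events = new ArrayList<>();

    // Append-only: there is deliberately no update or delete method.
    void append(String description, long amountInCents) {
        events.add(new Event(description, amountInCents));
    }

    // The current state is recomputed from the full history.
    long balance() {
        long total = 0;
        for (Event event : events) {
            total += event.amountInCents;
        }
        return total;
    }

    List<Event> history() {
        return Collections.unmodifiableList(events);
    }

    public static void main(String[] args) {
        AccountSketch account = new AccountSketch();
        account.append("deposit", 10_000);
        account.append("card payment", -2_500);
        // The payment was a mistake: it is not removed, it is compensated by a new event.
        account.append("refund of card payment", 2_500);
        System.out.println("balance=" + account.balance() + " events=" + account.history().size());
    }
}
```

The mistaken payment stays visible in the history forever, which is exactly what makes the log auditable.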

Image source: talk 5

There are many benefits:

- Writes are faster: when you want to update an object, you only have to store the update event. You do not need to read the object before changing its values and storing the new object state.

- You keep track of any change and are able to read a state from the past (what was the address at that date?)

- It fits well with Sagas: microservices are already generating events to indicate which part of the process they have already completed and to trigger the next steps of the process. When you add event sourcing to Sagas, you end up with a reliable log of everything that happened in your system. Moreover you can decide that this log will be your primary database.

This is awesome, but event sourcing comes with its own problems:
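The first two benefits can be sketched with the address example used earlier. This is a simplified illustration, not a production design: the `AddressHistorySketch` class and its methods are hypothetical, and the lookup assumes that events are appended in chronological order.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

// Hypothetical sketch: 'address changed' events kept in an append-only log.
final class AddressHistorySketch {

    static final class AddressChanged {
        final String customerId;
        final LocalDate date;
        final String address;

        AddressChanged(String customerId, LocalDate date, String address) {
            this.customerId = customerId;
            this.date = date;
            this.address = address;
        }
    }

    private final List<AddressChanged> log = new ArrayList<>();

    // Write path: a plain append, with no read of the current state beforehand.
    void changeAddress(String customerId, LocalDate date, String address) {
        log.add(new AddressChanged(customerId, date, address));
    }

    // Read path: the last event on or before the given date wins.
    // Assumes events were appended in chronological order.
    Optional<String> addressAsOf(String customerId, LocalDate date) {
        Optional<String> result = Optional.empty();
        for (AddressChanged event : log) {
            if (event.customerId.equals(customerId) && !event.date.isAfter(date)) {
                result = Optional.of(event.address);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        AddressHistorySketch history = new AddressHistorySketch();
        history.changeAddress("customer1", LocalDate.of(2016, 1, 1), "address1");
        history.changeAddress("customer1", LocalDate.of(2017, 6, 1), "address2");
        System.out.println(history.addressAsOf("customer1", LocalDate.of(2016, 12, 31)));
        System.out.println(history.addressAsOf("customer1", LocalDate.of(2017, 10, 1)));
    }
}
```

Scanning the whole log on every read is obviously the weak point of this sketch.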

Image source: talk 4

- Compensating events are not always easy, even just to understand. You have to properly manage complex cases like: reverting an event (`address1` was not the new address of `customer1` -> creation of an event to reset to an old address); dealing with merges (`customer1` and `customer2` are the same person: how to merge events?) and reverting a merge (oops, that was `customer1` and `customer3` instead), etc.

- You will still need aggregated views of your events (you cannot afford to look up information in the log every time). That’s why event sourcing is not used alone most of the time.

- And maybe the most important thing: don’t forget that events have to be considered as a part of your APIs.

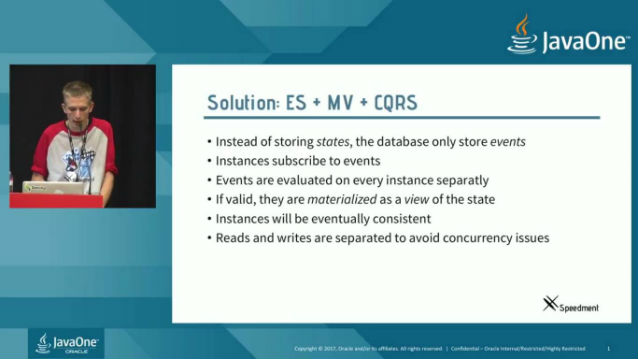

Finish with a pinch of CQRS and materialized views

CQRS stands for ‘Command Query Responsibility Segregation’.

It is another simple principle: basically it means that you should split your write (command) and your read (query) operations into separate data stores. It is based on the assumption that you have many reads and fewer writes (which is often the case). More than one data store means longer delays and eventual consistency, but remember you already accepted that for many good reasons.

Taken alone, CQRS is interesting, but if you combine Sagas, event sourcing and CQRS you get a pretty powerful pattern, because CQRS adds the possibility of asynchronously building aggregated views (sometimes called Materialized Views or Snapshots) on top of your events.

Image source: talk 3

Coming back to banking: when you display your bank account you are experiencing event sourcing but also CQRS. You instantly get a page with the total amount on your account and a list of the latest transactions, which is basically an aggregated view of the real transaction log (which should look like an event sourcing trail).

Oh! And yes, the total amount that you see on your account page is eventually consistent: you may not have all that money in the end…
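The split between the write side and the read side can be sketched as follows. It is a single-process approximation under stated assumptions: the `CqrsSketch` class and its methods are hypothetical, and the view is refreshed by an explicit call, where a real system would do it asynchronously.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical single-process sketch of CQRS on top of an event log.
final class CqrsSketch {

    static final class Transaction {
        final String accountId;
        final long amountInCents;

        Transaction(String accountId, long amountInCents) {
            this.accountId = accountId;
            this.amountInCents = amountInCents;
        }
    }

    // Write side: append-only event log.
    private final List<Transaction> eventLog = new ArrayList<>();
    // Read side: materialized view holding one balance per account.
    private final Map<String, Long> balanceView = new HashMap<>();
    // Number of events from the log already applied to the view.
    private int projected = 0;

    // Command: only touches the write side.
    void handleCommand(String accountId, long amountInCents) {
        eventLog.add(new Transaction(accountId, amountInCents));
    }

    // Projection: applies the events the view has not seen yet.
    // Called explicitly here to make the lag between write and read visible.
    void refreshView() {
        while (projected < eventLog.size()) {
            Transaction transaction = eventLog.get(projected++);
            balanceView.merge(transaction.accountId, transaction.amountInCents, Long::sum);
        }
    }

    // Query: only touches the read side, never the log.
    long queryBalance(String accountId) {
        return balanceView.getOrDefault(accountId, 0L);
    }

    public static void main(String[] args) {
        CqrsSketch bank = new CqrsSketch();
        bank.handleCommand("account-1", 5_000);
        System.out.println("before refresh: " + bank.queryBalance("account-1"));
        bank.refreshView();
        System.out.println("after refresh: " + bank.queryBalance("account-1"));
    }
}
```

Until `refreshView` runs, queries keep returning the stale balance: that is the eventual consistency you accepted earlier, made visible.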

Talks

All the illustrations in this article are taken from these great JavaOne 2017 sessions:

- 1 - Video: ACID Is So Yesterday: Maintaining Data Consistency with Sagas by Chris Richardson

- 2 - Video: Event Sourcing, Distributed Systems, and CQRS with Java EE by Sebastian Daschner

- 3 - Video: Three Microservice Patterns to Tear Down Your Monoliths by Per Minborg and Emil Forslund

- 4 - Slides: Event Sourcing with JVM Languages by Rahul Somasunderam

- 5 - Slides: Managing Consistency, State, and Identity in Distributed Microservices by Duncan DeVore

- 6 - Slides: Asynchronous by Default, Synchronous When Necessary by Tomasz Nurkiewicz

- 7 - Free book: Migrating to Microservice Databases by Edson Yanaga

Conclusion

A first thought before jumping into all these concepts: these patterns are appealing, but the slightest deviation can turn them into hell, and implementing large-scale transactional processing remains hard. On the other hand, if you keep a data monolith, your application will be doomed to limited scale and throughput. You have to think carefully about the real needs of your system.

Lots of technical solutions are already available to implement all these patterns, but for the moment (and it might change in the future) my feeling is that there is no mainstream framework that solves it globally. My advice would be that if you live with a monolith (service or data) and it fits your needs, you should not try to move to this kind of pattern. But if you have lots of microservices and high-volume transactional processing, you certainly cannot avoid going this way.